Principles for Ethical AI Policy with Stakeholder Input

9 Feb 2026

Practical guidance for UK SMEs on ethical AI: fairness, transparency, accountability, risk management and stakeholder‑informed policies.

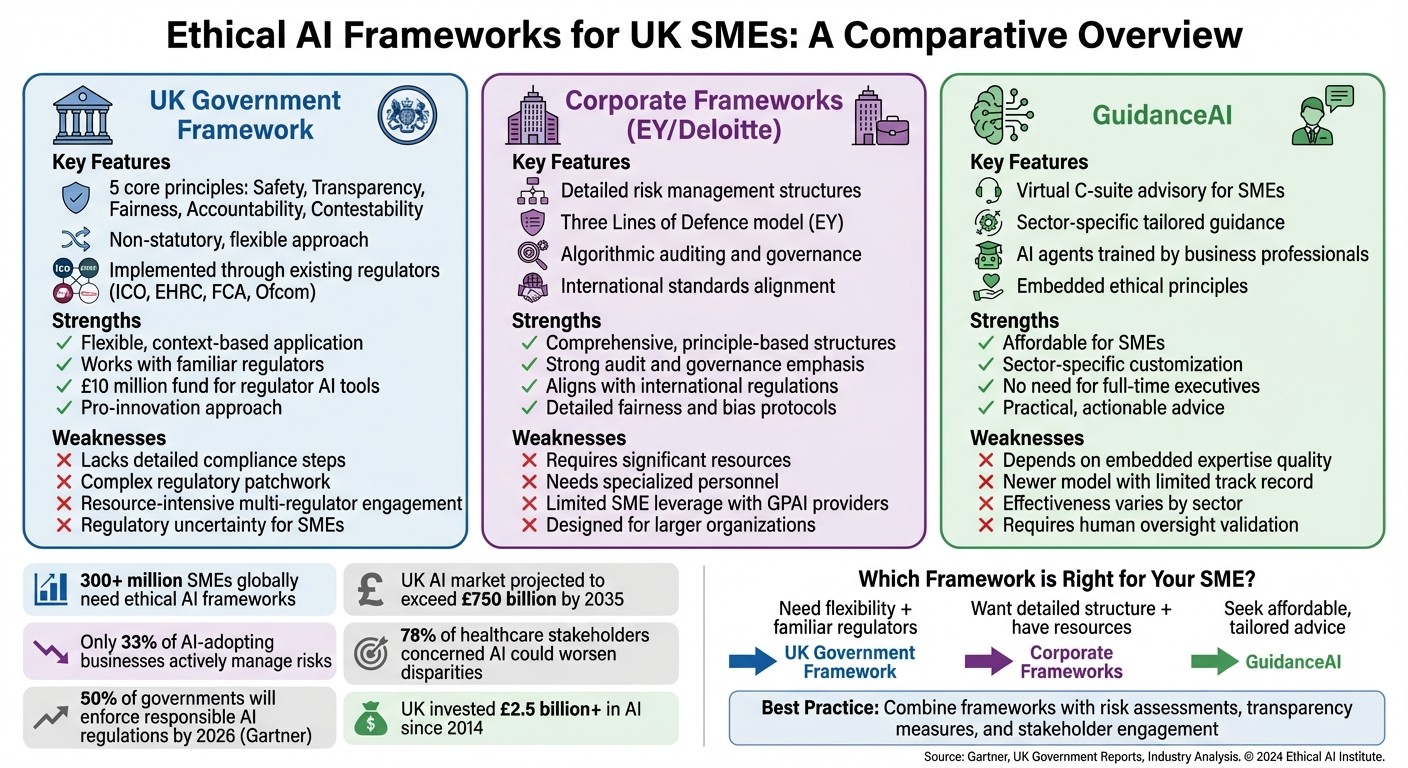

Small and medium-sized enterprises (SMEs) face challenges in adopting artificial intelligence (AI) due to limited resources and expertise. With over 300 million SMEs globally, the need for ethical AI frameworks is critical to avoid risks like data breaches, discriminatory outcomes, or regulatory penalties. Here's what you need to know:

UK Government's AI White Paper: Focuses on flexible principles like safety, transparency, and accountability, implemented through existing regulators. However, it lacks detailed compliance guidance, leaving SMEs uncertain.

Corporate Frameworks (EY and Deloitte): Offer detailed structures for managing AI risks, including fairness checks and governance models. These frameworks, however, often require significant resources, which may be challenging for SMEs to manage.

AgentimiseAI's GuidanceAI: Provides tailored, sector-specific leadership advice for SMEs, helping integrate ethical AI without the need for full-time executives. Its effectiveness depends on the quality of expertise embedded within the platform.

Quick Comparison

| Approach | Strengths | Weaknesses |
|---|---|---|
| UK Government Framework | Flexible guidance, works with familiar regulators, funding for regulator tools | Lacks detailed compliance steps, regulatory complexity can overwhelm SMEs |
| Corporate Frameworks | Detailed risk management and governance models | Resource-heavy, limited leverage for SMEs in contracts with AI providers |
| GuidanceAI | Affordable, tailored advice for SMEs | Relies on embedded expertise, newer model with limited track record |

To succeed, SMEs need to combine these frameworks with practical steps like risk assessments, transparency in AI processes, and stakeholder engagement. With the UK AI market projected to exceed £750 billion by 2035, ethical readiness is a key driver for growth and trust.

Comparison of Ethical AI Frameworks for UK SMEs: Government, Corporate, and GuidanceAI Approaches

1. UK Government White Paper Principles

The UK Government's AI White Paper outlines a framework aimed at promoting innovation while addressing key concerns. It is built around five main principles: safety, security and robustness; appropriate transparency and explainability; fairness; accountability and governance; and contestability and redress. Unlike the European Union's AI Act, which introduces binding regulations, the UK has opted for a more adaptable, non-statutory approach. Existing regulators, such as the Information Commissioner's Office (ICO) and the Equality and Human Rights Commission (EHRC), are tasked with interpreting and applying these principles within their respective domains. This approach aims to encourage innovation, particularly for smaller businesses, by avoiding overly rigid legislation.

Fairness and Bias Mitigation

The fairness principle underscores the importance of ensuring that AI systems do not produce discriminatory outcomes or infringe on human rights, as outlined in the Equality Act 2010. For small and medium-sized enterprises (SMEs), this means conducting equity impact assessments during the design phase and auditing training datasets to ensure diverse representation. The potential risks are clear: 78% of healthcare stakeholders have voiced concerns that AI could worsen disparities in healthcare if fairness is not prioritised. Since 2014, the UK Government has invested over £2.5 billion in AI and recently introduced a £10 million initiative to strengthen regulators' AI capabilities. Despite these investments, SMEs face a skills gap that mirrors the wider population, where only 21% of people feel confident in their ability to use AI in daily life. Addressing fairness naturally leads to the need for robust accountability measures, which is the focus of the next principle.
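
For an SME wondering what auditing a training dataset for representation might look like in practice, here is a minimal Python sketch. It is only an illustration: the `representation_report` function, the `age_band` attribute, the sample records and the 10% threshold are all assumptions made for this example, not figures taken from the White Paper or the Equality Act 2010.

```python
from collections import Counter


def representation_report(records, attribute, min_share=0.10):
    """Return each group's share of the dataset and flag shares below min_share.

    min_share is an illustrative threshold, not a legal or regulatory figure.
    """
    counts = Counter(record[attribute] for record in records)
    total = sum(counts.values())
    return {
        group: {
            "share": count / total,
            "under_represented": count / total < min_share,
        }
        for group, count in counts.items()
    }


# Hypothetical training records for a hiring model
records = (
    [{"age_band": "18-34"}] * 60
    + [{"age_band": "35-54"}] * 35
    + [{"age_band": "55+"}] * 5
)

report = representation_report(records, "age_band")
for group, stats in report.items():
    flag = "REVIEW" if stats["under_represented"] else "ok"
    print(f"{group}: {stats['share']:.0%} {flag}")
```

A check like this only shows who is present in the data. It says nothing about whether outcomes are fair, so it sits alongside an equity impact assessment rather than replacing one.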

Accountability and Governance

Accountability is a cornerstone of the framework, requiring that each major AI process has a designated senior owner. SMEs are encouraged to appoint a board-level member as the Senior Responsible Officer (SRO) to oversee AI systems, ensuring they are auditable, answerable, and equipped with safeguards against unintended harm. Instead of creating separate processes, SMEs are advised to integrate AI risk management into their existing corporate risk registers and compliance systems, with formal reviews conducted at least quarterly. Tools such as Data Protection Impact Assessments (DPIAs) and Equality Impact Assessments (EIAs) are recommended before deploying new AI systems.
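
One way to picture an AI entry in an existing risk register is sketched below in Python. The `AIRiskEntry` class, its field names and the 92-day reading of "quarterly" are assumptions made for illustration; the framework does not prescribe a format.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "at least quarterly" is read here as roughly every 92 days.
REVIEW_INTERVAL = timedelta(days=92)


@dataclass
class AIRiskEntry:
    system: str
    senior_owner: str   # the Senior Responsible Officer for this system
    risk: str
    dpia_done: bool     # Data Protection Impact Assessment completed
    eia_done: bool      # Equality Impact Assessment completed
    last_review: date

    def review_overdue(self, today: date) -> bool:
        """True when more than one review interval has passed."""
        return today - self.last_review > REVIEW_INTERVAL

    def ready_to_deploy(self) -> bool:
        """Both assessments are expected before a new system goes live."""
        return self.dpia_done and self.eia_done


# Hypothetical register entry
entry = AIRiskEntry(
    system="CV screening tool",
    senior_owner="Finance Director",
    risk="Demographic bias in shortlisting",
    dpia_done=True,
    eia_done=False,
    last_review=date(2025, 9, 1),
)

print(entry.review_overdue(today=date(2026, 2, 9)))
print(entry.ready_to_deploy())
```

Keeping the owner, the assessments and the review date on one record is what lets a board see at a glance which systems need attention.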

Transparency and Explicability

Transparency is another key principle, requiring organisations to clearly explain how AI systems make decisions, the significance of these decisions, and their potential impact on individuals. To achieve this, the government advocates using the Algorithmic Transparency Recording Standard (ATRS) to document the operations of AI tools. Michelle Donelan MP highlighted the importance of balance:

"A heavy-handed and rigid approach can stifle innovation and slow AI adoption. That is why we set out a proportionate and pro-innovation regulatory framework".

For SMEs, this principle involves tailoring explanations to suit the audience. For instance, consumer-facing interfaces should use plain English, while internal teams maintain detailed technical logs for accountability. The ICO has emphasised that "technical complexity cannot excuse failing to meet transparency obligations". In February 2024, UK Research and Innovation (UKRI) allocated £19 million to projects focusing on "trustworthy AI". Transparency also lays the groundwork for effective risk management and contestability.

Risk Management and Contestability

The contestability principle ensures that individuals have the means to challenge harmful or incorrect AI-driven decisions. For SMEs, this involves setting up processes that allow users to question automated outcomes, supported by human oversight for high-stakes decisions. The framework adopts a context-sensitive approach, meaning governance should align with the specific risk and application of the AI system. SMEs are also encouraged to maintain detailed audit trails to document decision-making logic, enabling board members to provide oversight and address concerns raised by stakeholders or regulators. While the framework is currently non-statutory, the government has indicated that a statutory duty for regulators may be introduced if the initial phase proves insufficient. This flexible, sector-specific approach aims to set a practical standard for AI governance while leaving room for adaptation.
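
As a rough illustration of an audit trail that supports contestability, the Python sketch below logs each automated decision with its reasons, holds high-stakes decisions for human review, and records any challenge. The `DecisionRecord` and `AuditTrail` names, the status values and the sample decision are hypothetical and chosen only for this example.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    decision_id: str
    system: str
    outcome: str
    reasons: list        # plain-language factors behind the outcome
    high_stakes: bool
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    status: str = "automated"
    challenges: list = field(default_factory=list)


class AuditTrail:
    def __init__(self):
        self._records = {}

    def log(self, record: DecisionRecord) -> None:
        # High-stakes decisions wait for a person before they count as final.
        if record.high_stakes:
            record.status = "pending_human_review"
        self._records[record.decision_id] = record

    def contest(self, decision_id: str, grounds: str) -> DecisionRecord:
        # A challenge is stored with the decision so reviewers see both.
        record = self._records[decision_id]
        record.status = "contested"
        record.challenges.append(grounds)
        return record

    def export(self) -> str:
        """Serialise the trail for board members or regulators."""
        return json.dumps([asdict(r) for r in self._records.values()], indent=2)


trail = AuditTrail()
trail.log(DecisionRecord(
    "D-001", "loan pre-check", "declined",
    ["income below threshold"], high_stakes=True,
))
contested = trail.contest("D-001", "income figure is out of date")
print(trail.export())
```

In a real deployment the records would live in durable, tamper-evident storage rather than in memory; the sketch only shows which information is worth capturing.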

2. Corporate Frameworks (EY and Deloitte)

Building on government guidelines, corporate frameworks provide practical, detailed models for navigating ethical AI implementation. Consultancies like EY and Deloitte offer structured approaches that SMEs can adapt, each with its own focus. These frameworks complement broader government principles by providing actionable, process-oriented strategies.

Fairness and Bias Mitigation

EY takes a comprehensive approach to fairness, categorising AI use cases into risk levels based on ethical, legal, and privacy factors. This classification determines the frequency of reviews and the necessary controls, such as assessing HR algorithms for demographic bias. EY also stresses the importance of examining datasets for imbalances before training, aiming to reduce both statistical and social biases. As EY explains:

"Fairness is at the core of our values and purpose. We take proactive measures to identify and mitigate bias throughout the lifecycle to help prevent the perpetuation of unfair and discriminatory AI systems".

Deloitte focuses on addressing challenges SMEs face with General Purpose AI (GPAI) systems. They highlight the risks associated with GPAI, particularly as many providers limit their liability for discriminatory outcomes. Deloitte recommends increasing human oversight and conducting algorithmic audits. This aligns with concerns from 67% of business leaders who believe more needs to be done to tackle the social and ethical risks of AI.
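
The idea of sorting AI use cases into risk levels that set the review frequency, as EY describes, can be sketched in a few lines of Python. The 1 to 5 scoring scale, the tier cut-offs and the review intervals below are assumptions made for illustration and are not EY's actual methodology.

```python
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Assumed review cadence per tier, in months
REVIEW_MONTHS = {Tier.LOW: 12, Tier.MEDIUM: 6, Tier.HIGH: 3}


def classify(ethical: int, legal: int, privacy: int) -> Tier:
    """Score each factor from 1 (minimal) to 5 (severe); the worst sets the tier."""
    for score in (ethical, legal, privacy):
        if not 1 <= score <= 5:
            raise ValueError("scores must be between 1 and 5")
    worst = max(ethical, legal, privacy)
    if worst >= 4:
        return Tier.HIGH
    if worst >= 3:
        return Tier.MEDIUM
    return Tier.LOW


# Hypothetical use cases and scores
use_cases = {
    "HR shortlisting algorithm": (5, 4, 4),
    "Marketing copy assistant": (2, 1, 1),
}

for name, scores in use_cases.items():
    tier = classify(*scores)
    print(f"{name}: {tier.value} risk, review every {REVIEW_MONTHS[tier]} months")
```

Letting the worst single factor set the tier is a deliberately cautious choice: a use case with one severe legal or privacy exposure is not averaged down by low scores elsewhere.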

Next, let’s look at how accountability is managed.

Accountability and Governance

EY employs a "Three Lines of Defence" model to ensure clear accountability. In this framework, technical teams directly manage risks (first line), functional teams oversee and review portfolios (second line), and independent IT risk or audit teams provide accountability (third line). This structure includes a formal AI portfolio intake process that evaluates use cases based on technical complexity, business value, and ethical risks.

Deloitte highlights the role of boards and senior management in navigating regulatory differences through targeted training and briefings. They stress the importance of mechanisms for contestability and redress, ensuring AI-driven decisions can be challenged. With Gartner predicting that 50% of governments will enforce responsible AI regulations by 2026, Deloitte underscores the need for strong oversight to meet these growing expectations.

Now, we turn to transparency and explicability.

Transparency and Explicability

EY separates transparency from explainability. Transparency involves sharing details like the purpose, design, training data, and limitations of AI systems. Explainability, on the other hand, focuses on helping users understand the decision-making process so they can challenge or validate outcomes. EY suggests making technical documentation and user instructions easily accessible to support this.

Deloitte takes a slightly different approach, grouping transparency and explainability into a single principle aligned with the UK Government's framework. They recommend SMEs deploying GPAI systems review provider contracts carefully to identify exclusions or caps on liability for discrimination. The UK Government has also allocated £10 million to help regulators like the FCA and Ofcom develop tools such as algorithmic forensics and auditing.

Lastly, let’s dive into risk management strategies.

Risk Management and Contestability

EY combines proactive and retroactive measures to manage risks. Proactive steps include scanning for toxic language during development, while retroactive monitoring helps identify emerging risks or biases after deployment. EY also advises conducting adversarial red teaming exercises to uncover vulnerabilities before launching AI systems.

Deloitte prioritises contestability, advocating for clear procedures that allow users to challenge AI outcomes. Forrester predicted that by 2025, 40% of highly regulated enterprises would integrate their data and AI governance functions, reflecting the increasing complexity of managing rapid advancements. SMEs can also turn to resources like the AI and Digital Hub, a multi-regulator advisory service designed to guide innovators through legal and regulatory requirements before launching products.

3. AgentimiseAI's GuidanceAI

GuidanceAI weaves ethical AI principles into leadership advisory services tailored for UK SMEs, offering virtual C-suite advice that’s both practical and personalised. By pairing founder-led businesses with specialised AI agents trained by seasoned business professionals, the platform tackles the real-world challenges of integrating ethical AI into decision-making processes. Let’s explore how GuidanceAI approaches fairness, accountability, and transparency in a way that resonates with UK SMEs.

Fairness and Bias Mitigation

Building on frameworks from the UK Government and leading corporations, GuidanceAI takes fairness a step further by adapting its guidance to the specific needs of individual sectors. Rather than relying on one-size-fits-all principles, it ensures fairness is embedded right from the design phase, addressing the unique demands and risks of different industries.

This sector-focused strategy helps minimise discrimination by directly incorporating fairness goals into leadership advice. Additionally, the platform provides clear fairness criteria upfront, showing users how outcomes are distributed across various leadership scenarios. This ensures that AI-driven guidance remains equitable and aligned with the diverse challenges faced by UK SMEs.

Accountability and Governance

GuidanceAI ensures accountability by positioning its AI agents as virtual advisors, not decision-makers. While these agents offer leadership-level insights, ultimate responsibility stays firmly with human leaders. This setup mirrors traditional boardroom practices, giving founder-led SMEs the benefits of AI-enhanced advice without compromising established governance norms.

For smaller businesses that may lack full-time senior executives, GuidanceAI fills the gap by offering boardroom-quality guidance. The AI agents, trained by experienced business professionals, deliver advice grounded in proven expertise. This ensures that recommendations are informed by human insight, not just algorithmic calculations.

Transparency and Explicability

Addressing the "black-box" issue often associated with AI, GuidanceAI focuses on making decision-making processes clear and understandable. The platform explains how AI contributes to outcomes, ensuring both technical and non-technical stakeholders can follow the logic behind its recommendations. This is especially critical in high-stakes situations where founders need to justify strategic decisions to boards or investors. By demystifying AI’s role, GuidanceAI builds trust and confidence in its guidance.

Strengths and Weaknesses

Different ethical AI frameworks come with their own set of advantages and challenges for UK SMEs. The UK Government's framework offers a flexible, pro-innovation approach, aiming to avoid "placing undue burdens on businesses". However, its lack of specific compliance guidance can leave smaller firms uncertain about how to proceed. On the other hand, corporate frameworks from firms like EY and Deloitte provide detailed, principle-driven structures, but these are often tailored for larger organisations with dedicated compliance teams. GuidanceAI attempts to fill this gap by offering sector-specific, high-level advice, eliminating the need for full-time executives. That said, its success hinges on the quality of the human expertise it incorporates.

Here's a breakdown of the key strengths and weaknesses of each approach for UK SMEs:

| Approach | Key Strengths for SMEs | Key Weaknesses for SMEs |
|---|---|---|
| UK Government White Paper | Offers flexibility with context-based application; works with familiar regulators like ICO and CMA; includes a £10 million fund to strengthen regulator AI capabilities | Lacks clear, step-by-step compliance guidance; creates regulatory uncertainty due to a "complex patchwork" of requirements; engaging with multiple regulators can be resource-intensive |
| Corporate Frameworks (EY/Deloitte) | Provides detailed, principle-based structures; aligns with international regulatory standards; places strong emphasis on audit and governance | Requires significant resources and specialised personnel; SMEs often have limited leverage when negotiating terms with GPAI providers, potentially leading to unfavourable liability conditions |
| GuidanceAI | Customised for specific sectors and SME workflows; delivers high-level advice without needing permanent executives; developed by experienced business professionals | Depends heavily on the quality of embedded human expertise; effectiveness varies across sectors; as a newer model, its track record is still emerging |

Feedback from the UK government's consultation, which drew 409 responses, underscores industry concerns about the lack of unified AI legislation. Valeria Gallo from Deloitte highlights a key challenge:

"A highly specialised, concentrated market of GPAI providers can make it challenging for smaller organisations... to negotiate effective contractual protections".

This issue sheds light on why only about one-third of businesses adopting AI are actively managing risks, leaving many others unprepared.

The reliance on multiple regulators can lead to conflicting interpretations, creating further complications. Corporate frameworks, while thorough, often require resources that SMEs simply cannot afford, such as dedicated ethics teams and legal departments. GuidanceAI, by embedding fairness, accountability, and transparency into its tailored advice, offers a more accessible middle ground. However, SMEs still need to bolster this approach with human oversight of AI outputs and careful contract evaluations to limit liability risks.

With over 300 million SMEs worldwide, there's a pressing need for practical, actionable guidance that fits their specific risk levels and resources. Whether through government initiatives like the AI and Digital Hub, international standards such as ISO/IEC 42001, or platforms like GuidanceAI, finding an approach that balances risks and resources is essential.

Conclusion

Creating ethical AI policies that truly benefit SMEs requires more than high-level principles; it demands practical, sector-specific guidance. The UK Government's framework provides flexibility, while corporate models offer detailed structures. Platforms like GuidanceAI bring accessible advice tailored to SMEs, though their effectiveness hinges on the depth of expertise they offer. This lays the groundwork for strategies that are both actionable and informed by stakeholder needs.

Engaging stakeholders is the key to turning frameworks into workable policies. A prime example comes from the European Commission's High-Level Expert Group, which in June 2019 tested ethical guidelines through 50 detailed interviews and various surveys. This process helped refine abstract ideas into concrete, actionable requirements. Without this kind of inclusive input, SMEs risk adopting superficial measures that fail to address deeper governance issues.

SMEs can build on these frameworks by aligning with international standards and conducting focused risk assessments. For instance, adopting standards like ISO/IEC 42001 can save time and effort by using established guidelines. Moving from general principles to specific risk assessments allows businesses to pinpoint threats unique to their operations. Transparency is another essential step: clearly informing users when they interact with AI systems builds trust. Regular ethics training for employees further reinforces these efforts. With only about a third of businesses adopting AI actively managing its risks, there’s a significant opportunity for those willing to lead the way. Platforms like GuidanceAI address these gaps by offering leadership-focused advice that integrates ethical principles into the unique challenges of founder-led businesses.

The UK AI market is projected to grow to over US$1 trillion (around £750 billion) by 2035, presenting enormous opportunities for SMEs that can show ethical readiness. Whether through government frameworks, international standards, or accessible advisory platforms, the goal is to balance risk management with available resources. By doing so, SMEs can gain the trust of customers, investors, and regulators, setting themselves up for long-term success in an AI-driven economy.

FAQs

How can small businesses adopt ethical AI on a budget?

Small and medium-sized enterprises (SMEs) can embrace ethical AI practices without breaking the bank by taking practical, manageable steps. For starters, explore free or affordable tools designed to identify and minimise bias in AI systems. Keep governance straightforward by maintaining basic records and provide your team with clear, easy-to-follow guidelines on using AI responsibly. These small actions can go a long way in reducing risks and promoting ethical behaviour.

SMEs can also look to frameworks like the UK’s Data and AI Ethics Framework. This resource lays out principles around transparency, fairness, and accountability that can be adjusted to fit your business. Taking a phased approach works well: start with basic ethical measures and expand efforts as your resources grow. The goal isn’t to achieve perfection but to implement thoughtful, proportionate practices that align with your company’s needs and capabilities.

How do the UK Government's AI policies differ from corporate AI frameworks?

The UK Government has introduced policies like the Data and AI Ethics Framework to establish broad principles for responsible AI development across industries. These principles focus on key areas such as safety, transparency, fairness, and accountability. The goal is to strike a balance between encouraging innovation and maintaining ethical oversight. To achieve this, the government supports initiatives like regulator guidance and the creation of the National Data Library, aiming to provide a regulatory environment that fosters growth while ensuring proper monitoring.

On the other hand, corporate AI frameworks are designed to meet the specific needs of individual organisations. These frameworks emphasise internal governance and operational standards, often focusing on mitigating risks, ensuring compliance, and building trust with stakeholders. While they align with government principles like fairness and transparency, corporate policies are more focused on practical application, tailored to achieve business objectives and address day-to-day operational challenges.

Government frameworks provide overarching guidance to promote responsible AI across various sectors. In contrast, corporate frameworks dive deeper into organisation-specific details, addressing operational risks and ethical practices in a way that aligns with business priorities.

How does GuidanceAI promote fairness and transparency in AI for SMEs?

GuidanceAI focuses on embedding ethical principles into every step of AI development and deployment to promote fairness and openness. This includes offering clear, easy-to-understand explanations for AI decisions, creating systems that avoid bias, and engaging stakeholders to tackle ethical challenges head-on.

By emphasising transparency, accountability, and inclusivity, GuidanceAI enables SMEs to create AI systems that people can trust. These systems are designed to reflect societal values and promote responsible choices, ensuring they work not just effectively but also fairly for everyone.