AI Literacy Checklist for Executives

19 Feb 2026

Executives must master AI literacy—align strategy, data, people, processes and governance to turn AI into measurable business value.

AI literacy is no longer optional for executives. Here's why:

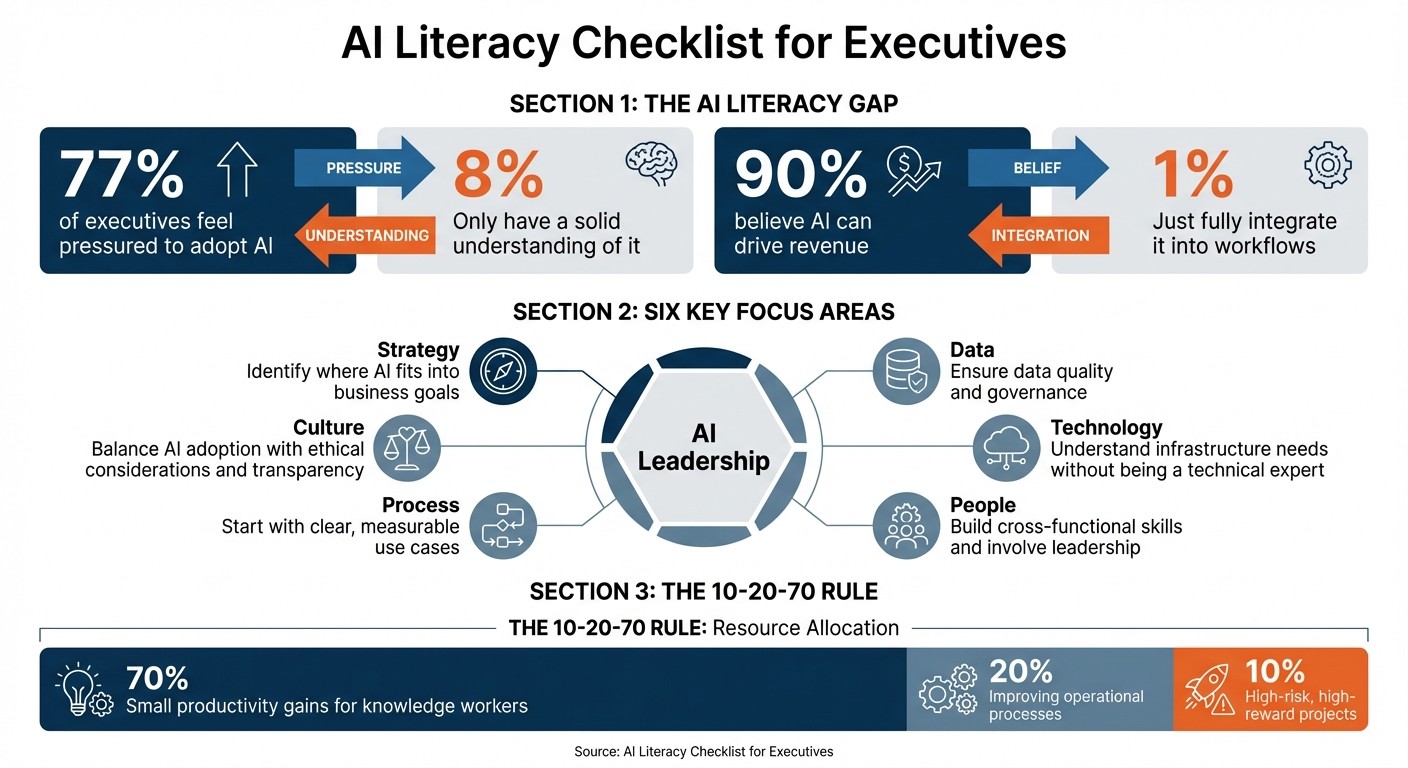

77% of executives feel pressured to adopt AI, yet only 8% have a solid understanding of it.

90% believe AI can drive revenue, but just 1% fully integrate it into their workflows.

For smaller businesses, low AI knowledge directly impacts agility and decision-making.

This checklist focuses on six areas to help executives lead AI initiatives effectively:

Strategy: Identify where AI fits into business goals.

Data: Ensure data quality and governance.

Technology: Understand infrastructure needs without being a technical expert.

People: Build cross-functional skills and involve leadership.

Process: Start with clear, measurable use cases.

Culture: Balance AI adoption with ethical considerations and transparency.

To succeed, executives must move beyond delegating AI decisions. Instead, they should understand core concepts, avoid buzzwords, and focus on practical applications that deliver measurable results.

AI Literacy Gap Among Executives: Key Statistics and Six Focus Areas

AI's Role in Business Strategy

How AI Supports Your Business Objectives

AI strategy isn't just about deciding whether to invest - it’s about figuring out where and how much to invest to strengthen your competitive edge. For founder-led SMEs, this means looking at AI as a tool for long-term advantage rather than simply jumping on the latest trend.

To start, review what sets your business apart. In industries where success hinges on agility and data-driven decisions, disruption is happening fast. If your competitive advantage relies on delivering quality insights or scaling personalisation, AI moves from being a nice-to-have to a must-have.

The best way forward? Take a workflow-centric approach. Map out your processes in simple, clear terms. If a task is well-documented and repeatable, it’s a prime candidate for AI automation. Stéphane Bancel, CEO of Moderna, offers a great example:

"We're looking at every business process - from legal to research, to manufacturing, to commercial - and thinking about how to redesign them with AI".

When allocating resources, consider the 10-20-70 rule: dedicate 70% of your efforts to small productivity gains for knowledge workers, 20% to improving operational processes, and 10% to high-risk, high-reward projects. This balanced approach ensures immediate wins while leaving room for strategic innovation.
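As a rough illustration of the split described above, the sketch below applies the three shares to a budget. The budget figure and the category labels are assumptions made for the example, not recommendations.

```python
# Illustrative split of an AI budget using the 10-20-70 shares described above.
# Category labels and the example budget are assumptions for this sketch.
ALLOCATION = {
    "productivity_gains": 0.70,      # small gains for knowledge workers
    "operational_processes": 0.20,   # improving operational processes
    "high_risk_high_reward": 0.10,   # ambitious, uncertain projects
}


def allocate(total_budget: float) -> dict[str, float]:
    """Split a budget according to the shares in ALLOCATION."""
    return {name: round(total_budget * share, 2) for name, share in ALLOCATION.items()}


plan = allocate(100_000)
for name, amount in plan.items():
    print(f"{name}: £{amount:,.0f}")
```

The same function works for hours or headcount as well as money, since only the proportions matter.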

But here’s the kicker - leadership buy-in is the single biggest factor in AI success. Without active executive support and a shared vision, even the most technically sound projects can fizzle out during the pilot phase. A committed leadership team can help turn isolated experiments into scalable success.

This balanced approach to resource allocation and leadership involvement sets the stage for identifying AI use cases that deliver measurable results.

Finding AI Use Cases That Deliver ROI

A 2025 study revealed that 95% of AI investments fail to show measurable returns due to poor measurement frameworks. The fix? Start by benchmarking your current performance before rolling out AI.

An impact-effort prioritisation matrix is a practical tool here. Plot potential AI projects based on their business value and implementation difficulty. Focus first on "Quick Wins" - those high-impact, low-effort opportunities - to build momentum and gain executive trust. Early successes can pave the way for tackling more complex initiatives.
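One way to make the matrix concrete is to score each candidate project and let a small script sort it into a quadrant. This is a minimal sketch: the 1 to 10 scales, the threshold of 5, the quadrant names other than "Quick Win", and the example projects are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Project:
    name: str
    impact: int  # business value, 1 (low) to 10 (high); assumed scale
    effort: int  # implementation difficulty, 1 (low) to 10 (high); assumed scale


def quadrant(p: Project, threshold: int = 5) -> str:
    """Place a project in one of four impact-effort quadrants."""
    high_impact = p.impact > threshold
    high_effort = p.effort > threshold
    if high_impact and not high_effort:
        return "Quick Win"
    if high_impact and high_effort:
        return "Major Project"
    if not high_impact and not high_effort:
        return "Fill-In"
    return "Deprioritise"


# Hypothetical candidates with made-up scores
projects = [
    Project("Document classification", impact=8, effort=3),
    Project("Custom forecasting model", impact=9, effort=8),
    Project("Meeting summaries", impact=4, effort=2),
    Project("Legacy system rewrite", impact=3, effort=9),
]

# Quick wins first, then everything else by descending impact
ranked = sorted(projects, key=lambda p: (quadrant(p) != "Quick Win", -p.impact))
for p in ranked:
    print(f"{p.name}: {quadrant(p)}")
```

The scores themselves are the hard part. They are best agreed in a workshop with both business and technical owners rather than assigned by one person.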

For instance, in 2025, a mid-sized insurance company invested £640,000 in six small automation projects, including document classification and fraud detection. The result? Gross annual savings of £2.56 million and a net first-year saving of £2.08 million. The key was targeting repetitive, high-volume tasks.
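The arithmetic behind a case like this is simple enough to script. The sketch below uses the investment and gross savings figures quoted above and assumes no other costs. On that assumption, simple subtraction gives a net of £1.92 million rather than the £2.08 million quoted, so the quoted net figure presumably rests on a cost basis that is not stated here.

```python
# Simple first-year return calculation using the figures quoted above.
# Assumes the investment is the only cost and savings accrue evenly over the year.
investment = 640_000
gross_annual_savings = 2_560_000

net_first_year = gross_annual_savings - investment
roi = net_first_year / investment                      # return per £1 invested
payback_months = investment / (gross_annual_savings / 12)

print(f"Net first-year saving: £{net_first_year:,.0f}")
print(f"ROI: {roi:.0%}")
print(f"Payback period: {payback_months:.1f} months")
```

Running the same calculation against your own baseline figures before a pilot starts gives you the benchmark that the measurement framework needs.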

There are three main areas to explore for AI opportunities:

Repetitive low-value tasks: Automate mundane work to free up time for higher-priority activities.

Skill bottlenecks: Use AI tools to overcome gaps in expertise or capacity.

Complex decision-making: Apply AI to analyse data and support better decisions.

Take Promega, for example. In 2024, their marketing team saved 135 hours in just six months by using ChatGPT Enterprise to draft email campaigns and convert copy into paid ads. Similarly, Poshmark’s CFO, Rodrigo Brumana, used AI to generate Python code, automating the reconciliation of millions of spreadsheet rows for performance reports and accounting memos.

The real value of AI lies in what you do with the time it saves. Simply saving three hours a week won’t mean much unless that time is reinvested into activities like increasing sales proposals, speeding up project timelines, or refining strategies. As Claire Vo, Chief Product and Technology Officer at LaunchDarkly, puts it:

"Every time I do something I find annoying, I ask myself, how can I not have to do this again?"

To avoid wasted efforts, focus on pilots with clear executive backing and well-defined plans for scaling into production. These examples provide a practical guide for assessing your current AI initiatives and ensuring they deliver tangible results.

Basic AI Concepts for Non-Technical Leaders

Core AI and Machine Learning Principles

You don’t need to be a data scientist to lead AI initiatives effectively, but understanding the basics is essential. Artificial Intelligence (AI) refers to machines performing tasks that typically require human intelligence, like reasoning, learning, or problem-solving.

Machine Learning (ML), a subset of AI, uses algorithms to identify patterns and make predictions by processing data instead of relying on pre-written rules. For instance, ML can predict customer churn by analysing thousands of past cases rather than coding each scenario manually.

Deep Learning (DL) takes ML further by using multi-layered artificial neural networks to handle massive amounts of unstructured data. A good example is GPT-3, which operates with up to 96 layers in its architecture.

These distinctions are more than academic - they impact your strategy. Deep Learning requires significantly more computing power and data than standard ML, which can influence both infrastructure and budget planning. For example, training deep belief networks on GPUs can be over 70 times faster than CPUs. Most businesses today rely on Narrow AI, which is designed for specific tasks like spam filtering or recommendation engines. On the other hand, Artificial General Intelligence (AGI) - machines with human-like cognitive abilities - remains a theoretical concept.

Another critical challenge is the "black box" problem in Deep Learning. These models often make decisions in ways that are hard to interpret. For high-stakes applications, prioritise explainable AI to ensure accountability and maintain trust. By grasping these fundamentals, you’ll be better equipped to filter meaningful insights from industry noise.

Separating Buzzwords from Useful Knowledge

Once you understand the basics, the next step is cutting through the buzzwords to focus on what matters. Here’s the bottom line: data is the lifeblood of AI. Without high-quality, often labelled data, even the most advanced algorithms will fail. Before diving into complex Deep Learning technologies, invest in strong data governance.

It’s also important to distinguish Robotic Process Automation (RPA) from AI. RPA is designed to automate repetitive, rules-based tasks - like transferring data between spreadsheets. In contrast, AI handles tasks requiring judgement or pattern recognition. RPA helps reduce operational costs, while AI drives innovation and supports core intellectual property.

Think of AI as an augmenter rather than a replacement. The best implementations treat AI as a "co-pilot" that enhances human capabilities instead of replacing human judgement. As Isabella Inari Cioffi from Deloitte Malta explains:

"AI literacy is not about becoming a data scientist or a programmer - it's about developing the ability to understand, question, and collaborate with AI tools intelligently".

Keep in mind, AI isn’t perfect. It can "hallucinate", reflect biases in its training data, or experience model drift (where accuracy declines over time). Always ensure human oversight, especially when using Generative AI tools.

Lastly, don’t succumb to the temptation to apply AI everywhere. Success comes from setting narrow, measurable goals with clear ROI. Restraint is not a reason to stand still, though. As Ahmad Karim, a Senior IT & AI Leader, puts it:

"The question is no longer whether you can afford to adopt AI - it's whether you can afford not to".

Focus on use cases that align directly with your business goals. It’s not about chasing trends - it’s about making smart, strategic choices.

Data Readiness and Infrastructure

Evaluating Data Quality and Governance

The success of any AI initiative hinges on the quality of your data. Before diving into algorithms or investing in platforms, it’s critical to assess whether your organisation’s data is ready to support AI workloads. Interestingly, nearly half of employers admit to delaying AI implementation due to data readiness issues.

A good starting point is conducting a simple audit. Ask yourself: can you trace the source of every number in your reports? Do you have a well-documented inventory of all enterprise data sources, described in terms that business users – not just IT professionals – can understand? Andrea Gigli, Chief Data, Digital & AI Officer at Autoguidovie, highlights this point:

"AI can tolerate imperfect data. It cannot tolerate silent ambiguity."

This underscores the importance of establishing a single source of truth for key business metrics. For instance, if different departments define "active customer" in varying ways, AI will only magnify that inconsistency. To avoid such pitfalls, implementing automated data quality checks during the ingestion process is crucial. Waiting for issues to emerge later can derail projects. Effective governance isn’t about creating red tape; it’s about building a framework that ensures reliability. This includes assigning clear data ownership, enforcing access permissions, and documenting business logic in straightforward, accessible language.
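To give a feel for what an automated check at ingestion might look like, here is a minimal sketch that flags missing fields and duplicate customer IDs before a batch is loaded. The field names and sample records are hypothetical, and a production pipeline would typically use a dedicated validation tool rather than hand-written checks.

```python
from collections import Counter

# Hypothetical schema: fields every customer record is expected to carry
REQUIRED_FIELDS = ("customer_id", "last_order_date", "region")


def check_batch(records: list[dict]) -> dict[str, list]:
    """Return data quality issues found in a batch before it is loaded."""
    issues: dict[str, list] = {"missing_fields": [], "duplicate_ids": []}
    for index, record in enumerate(records):
        # A field counts as missing if it is absent, None, or an empty string
        missing = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
        if missing:
            issues["missing_fields"].append((index, missing))
    id_counts = Counter(r.get("customer_id") for r in records if r.get("customer_id"))
    issues["duplicate_ids"] = sorted(cid for cid, n in id_counts.items() if n > 1)
    return issues


batch = [
    {"customer_id": "C001", "last_order_date": "2025-11-02", "region": "North"},
    {"customer_id": "C002", "last_order_date": "", "region": "South"},
    {"customer_id": "C001", "last_order_date": "2025-12-14", "region": "North"},
]
report = check_batch(batch)
print(report)
```

The point for an executive is less the code than the timing: the batch is inspected before it reaches any report or model, so problems surface at the door instead of weeks later.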

A readiness checklist can help you identify hidden risks before they become major obstacles. Here’s how to interpret your results:

| Readiness Score (Yes/No) | Interpretation | Recommended Action |
|---|---|---|
| 20–24 "Yes" | Solid Foundations | Move forward confidently; focus on maintaining standards over time. |
| 15–19 "Yes" | Usable Base | Suitable for targeted projects, but avoid scaling prematurely. |
| 10–14 "Yes" | Substantial Risks | Results may be unreliable; manual oversight will likely be needed. |
| < 10 "Yes" | Fragile Foundations | Investments in AI are premature; prioritise building a structured foundation. |

Source: Andrea Gigli, Data Readiness Checklist
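The bands in the table translate directly into a lookup. In this sketch the maximum of 24 is inferred from the top band, and the function name is a placeholder.

```python
def readiness_band(yes_count: int) -> str:
    """Map a count of "Yes" answers to the interpretation bands in the table above.

    The upper limit of 24 is an assumption inferred from the top band.
    """
    if not 0 <= yes_count <= 24:
        raise ValueError("yes_count must be between 0 and 24")
    if yes_count >= 20:
        return "Solid Foundations"
    if yes_count >= 15:
        return "Usable Base"
    if yes_count >= 10:
        return "Substantial Risks"
    return "Fragile Foundations"


for score in (22, 17, 12, 6):
    print(score, "->", readiness_band(score))
```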

Once governance is in place, the next step is to ensure your infrastructure can meet AI’s demanding requirements.

Infrastructure Requirements for AI

With verified, high-quality data as a foundation, your infrastructure must be equipped to handle the heavy computational and storage demands of AI. High-performance computing, particularly GPUs, is essential for training AI models. Scalable storage solutions are equally important to manage the large datasets AI requires. To maintain a steady flow of clean data, robust data pipelines and automated ETL processes are non-negotiable. Using standard protocols like REST APIs can also streamline communication between platforms.

Network bandwidth is another critical factor. High bandwidth prevents bottlenecks during large data transfers, while encryption and strict access controls safeguard sensitive AI endpoints. Tools like Docker and Kubernetes are invaluable for managing containerised AI workloads, enabling seamless autoscaling to match demand.

Although over 75% of companies have adopted AI in at least one area of their business, fewer than 20% have the essential practices in place to scale these efforts effectively. A 2025 study revealed that 95% of enterprise generative AI initiatives failed to show measurable financial impact, largely due to weak integration with existing workflows.

Before deployment, conduct a comprehensive audit of your compute resources, storage capacity, and network bandwidth. Prioritise data quality and infrastructure readiness over flashy new tools. Finally, ensure your team includes not only data scientists but also engineers skilled in cloud architecture and data governance. A balanced team is key to turning AI ambitions into measurable results.

Ethics, Risk Management, and AI Governance

Once you've built strong data and infrastructure foundations, the next step is tackling the ethical and governance challenges essential for implementing AI safely and at scale.

Identifying and Managing Ethical Risks

AI systems, if not carefully managed, can reinforce biases, compromise data, or even cause harm. Addressing these risks isn't just a technical issue - it’s a leadership responsibility. As the Information Commissioner’s Office (ICO) puts it:

"You cannot delegate these issues to data scientists or engineering teams. Your senior management, including DPOs, are also accountable for understanding and addressing them appropriately and promptly."

Bias in AI often stems from two key sources: statistical issues (like sampling or measurement errors) and societal factors (such as historical inequalities embedded in data). For instance, if a recruitment algorithm factors in engineering degrees, it might inadvertently perpetuate gender imbalances, reflecting the male-dominated history of the field.

Transparency is crucial. Let users know when AI is involved in decision-making and explain outcomes in straightforward, non-technical language [43,44]. A layered explanation approach works well - provide essential details upfront and offer more technical insights for auditors or those seeking deeper understanding. Assign a Senior Responsible Owner (SRO) to serve as the point of contact for users who wish to challenge AI-driven decisions.

To comply with UK GDPR, conduct Data Protection Impact Assessments (DPIAs) whenever AI processing is likely to result in a high risk to individuals' rights and freedoms. Treat DPIAs as living documents, updating them regularly, and perform bias audits, especially in high-stakes areas like hiring or lending. If a personal data breach occurs that is likely to pose a risk to individuals, notify the ICO within 72 hours of becoming aware of it.

Put safety measures in place, such as content filters, rate limits, and emergency shutdown options, to prevent misuse [42,44]. Additionally, optimise your model size and infrastructure to reduce the environmental footprint of AI systems [42,44].

Creating AI Governance Frameworks

Identifying risks is only the beginning - turning ethical principles into actionable controls requires a strong governance framework.

Governance acts as the backbone for scaling AI safely. Forward-thinking organisations see governance as an enabler of growth rather than a roadblock.

Start by compiling an inventory of all AI systems, documenting their technical details, risk assessments, and data sources. Use a structured scoring model, like the AI Governance Exposure Index (AGEI), to classify systems by risk. This model evaluates factors such as system impact (35%), model autonomy (25%), regulatory sensitivity (20%), and monitoring maturity (20%). High-risk systems demand closer oversight, while lower-risk tools can operate with more flexibility.
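A weighted score of this kind is straightforward to compute. The sketch below uses the four weights listed above. Everything else is an assumption for illustration: each factor is scored as exposure from 0 (low) to 100 (high), so a mature monitoring setup earns a low score on that factor, and the tier thresholds of 70 and 40 are hypothetical.

```python
# Weights as percentages, taken from the factors listed above
WEIGHTS = {
    "system_impact": 35,
    "model_autonomy": 25,
    "regulatory_sensitivity": 20,
    "monitoring_maturity": 20,
}


def exposure_score(factors: dict[str, float]) -> float:
    """Weighted average of factor scores, each assumed to run 0 (low) to 100 (high exposure)."""
    missing = WEIGHTS.keys() - factors.keys()
    if missing:
        raise ValueError(f"missing factors: {sorted(missing)}")
    return sum(WEIGHTS[name] * factors[name] for name in WEIGHTS) / 100


def risk_tier(score: float, high: float = 70, medium: float = 40) -> str:
    """Classify a score into a tier; the thresholds are hypothetical."""
    if score >= high:
        return "high"
    if score >= medium:
        return "medium"
    return "low"


# Hypothetical systems from an AI inventory
customer_chatbot = {"system_impact": 80, "model_autonomy": 60,
                    "regulatory_sensitivity": 90, "monitoring_maturity": 50}
internal_summariser = {"system_impact": 20, "model_autonomy": 30,
                       "regulatory_sensitivity": 10, "monitoring_maturity": 40}

for name, factors in [("customer_chatbot", customer_chatbot),
                      ("internal_summariser", internal_summariser)]:
    score = exposure_score(factors)
    print(f"{name}: {score:.1f} ({risk_tier(score)})")
```

Scoring every system in the inventory the same way makes it easier to justify why one tool gets a quarterly review and another gets continuous oversight.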

Draft a clear AI policy that outlines roles, responsibilities, and guidelines for appropriate use. This policy should integrate seamlessly with existing frameworks for Enterprise Risk Management (ERM), internal audits, cybersecurity, and legal compliance - AI governance shouldn’t operate in isolation. With the UK Data (Use and Access) Act having received Royal Assent on 19 June 2025 and its provisions being phased in, staying ahead of regulations is essential [43,46]. This proactive approach not only ensures compliance but also strengthens trust and accountability across your operations.

Real-time monitoring is another critical element. Use continuous telemetry to identify issues like model drift, bias, or performance degradation. Conduct regular audit drills and mock regulatory inspections to ensure decision logs are complete and accessible when needed. For AI as a Service (AIaaS), demand detailed documentation on training data sources and perform thorough checks to avoid inheriting biases.
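At its simplest, a drift check compares recent accuracy against the accuracy measured at launch. The following is a minimal sketch of that idea only: the 5-point tolerance, the baseline figure, and the sample outcomes are assumptions, and real monitoring would also track input data and bias metrics.

```python
def detect_drift(baseline_accuracy: float,
                 recent_outcomes: list[bool],
                 tolerance: float = 0.05) -> tuple[float, bool]:
    """Compare recent accuracy with a baseline.

    recent_outcomes holds True where a prediction matched the outcome
    later confirmed by a human or by real-world results.
    Returns (recent_accuracy, drifted).
    """
    if not recent_outcomes:
        raise ValueError("recent_outcomes must not be empty")
    recent_accuracy = sum(recent_outcomes) / len(recent_outcomes)
    drifted = (baseline_accuracy - recent_accuracy) > tolerance
    return recent_accuracy, drifted


# Hypothetical data: 16 of the last 20 predictions were correct
outcomes = [True] * 16 + [False] * 4
accuracy, drifted = detect_drift(baseline_accuracy=0.92, recent_outcomes=outcomes)
print(f"Recent accuracy: {accuracy:.0%}, drift flagged: {drifted}")
```

A flag like this should trigger a human review, not an automatic fix. The question to ask is what changed in the business or the data since the model was approved.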

Finally, set up confidential reporting channels where employees and external users can report AI failures or harmful impacts without fear of retaliation. Aiming to respond within 72 hours, mirroring the deadline UK GDPR sets for notifying personal data breaches, demonstrates a commitment to accountability and transparency.

Building Team Capability and Leadership Skills

Once your governance frameworks are in place, the next step is ensuring your team is equipped to execute your AI strategy effectively. This isn't just about hiring data scientists; it's about fostering a broader understanding of AI across your organisation and knowing when to bring in external expertise.

Assessing Team AI Skills and Knowledge Gaps

Before diving into training or recruitment, it’s important to assess where your organisation currently stands. Using AI tools is one thing, but building the organisational capability to scale AI - including governance, culture, and skills - is a different challenge altogether. As Sarah Daly, author of the AI360 Review, explains:

"AI capability enables AI adoption to succeed".

Start by evaluating your organisation's readiness across six critical areas: Strategy, Data, Technology, People, Process, and Culture. A structured skills inventory can help identify gaps and avoid stalled pilot projects. For instance, Microsoft's AI Readiness Assessment, which takes about 45 minutes to complete, evaluates preparedness across seven pillars.

The SFIA Foundation highlights that:

"senior roles (SFIA 6-7) may need 'Working' or 'Practitioner' literacy rather than 'Expert' if their focus is strategic rather than technical".

To tailor training effectively, categorise your team into four levels of AI literacy - Awareness, Working, Practitioner, and Expert. Here’s how these categories break down:

| AI Literacy Level | Key Focus | Development Approach |
|---|---|---|
| Awareness | Basic understanding of AI and its applications | Introductory workshops, general awareness sessions |
| Working | Regular effective use and configuration of AI tools | Tool-specific training, guided implementation |
| Practitioner | Selection and implementation of AI solutions | Advanced workshops, practical projects |
| Expert | Technical creation, customisation, and innovation | Specialised technical training, R&D activities |

Cross-functional fluency between technical and business teams is another key indicator of readiness. Once you’ve identified gaps, you can create a targeted roadmap to guide your digital transformation efforts.

Planning for Digital Transformation

With a clear understanding of your organisation’s gaps, the next step is to develop a phased roadmap that aligns AI integration with measurable business outcomes. While 90% of executives believe AI has the potential to drive revenue growth, only 1% report that AI is fully integrated into their workflows. The challenge often lies in execution rather than ambition.

Leadership plays a critical role here. Executives must actively engage with AI tools to model adoption and drive cultural change. For example, in early 2024, Box CEO Aaron Levie announced a shift to an "AI-first" model. To support this transition, the company introduced "Friday lunches" as open forums for employees to share AI use cases and best practices. Levie emphasised the importance of eliminating repetitive tasks to focus on customer-facing work while ensuring employees remained accountable for AI-generated outputs.

Tailor learning paths to specific roles rather than using generic training programmes. A mid-sized law firm, for instance, implemented several AI tools without proper communication or training. Employees didn’t understand how the tools applied to their workflows, and the investment ultimately failed. This highlights a common issue: 47% of employees using AI report not knowing how to achieve the productivity gains their employers expect.

To start, use a 30–90 day action plan. Begin by establishing baseline metrics, such as time spent on tasks or error rates. Identify two or three use cases and document associated workflows in natural language. Run AI tools in "shadow mode" with human oversight before transitioning to autonomous execution for low-risk tasks. Create feedback loops where employees can share experiences and raise concerns. Organisations with high-trust cultures are twice as likely to succeed in adopting AI tools.
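Shadow mode produces a simple, useful number: how often the AI's suggestion matched what the human actually decided. The sketch below computes that agreement rate and lists the cases worth reviewing. The record structure and sample cases are hypothetical, and the agreement level at which a task is safe to hand over is a judgement for the business, not something the code decides.

```python
from dataclasses import dataclass


@dataclass
class ShadowResult:
    case_id: str
    human_decision: str   # what the employee actually did
    ai_suggestion: str    # what the AI proposed, logged but not acted on


def shadow_report(results: list[ShadowResult]) -> tuple[float, list[str]]:
    """Return the agreement rate and the IDs of cases where AI and human differed."""
    if not results:
        raise ValueError("results must not be empty")
    disagreements = [r.case_id for r in results if r.human_decision != r.ai_suggestion]
    agreement_rate = 1 - len(disagreements) / len(results)
    return agreement_rate, disagreements


# Hypothetical invoice-routing pilot
results = [
    ShadowResult("INV-101", "approve", "approve"),
    ShadowResult("INV-102", "escalate", "approve"),
    ShadowResult("INV-103", "approve", "approve"),
    ShadowResult("INV-104", "reject", "reject"),
]
rate, to_review = shadow_report(results)
print(f"Agreement: {rate:.0%}; review: {to_review}")
```

The disagreement list is often more valuable than the headline rate, because each case shows either a gap in the tool or an inconsistency in how people apply the process.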

Using External Support for AI Projects

When internal expertise falls short, external support can be a valuable resource. External specialists can help bridge gaps in roles like machine learning engineers, data experts, or AI specialists. They can also accelerate progress by turning ambitious plans into actionable steps while avoiding disconnected experiments.

Pilot collaborations are a good way to test technical expertise, project management skills, and overall fit. Set clear goals with measurable KPIs - such as reducing cycle times or error rates - to track success. Ensure that external partners provide knowledge transfer through training programmes, minimising long-term reliance on outside help.

For leadership-focused solutions, platforms like AgentimiseAI offer tailored advice for executives. Their GuidanceAI platform connects leadership teams with expert-level insights without requiring full-time senior hires. These virtual C-suite advisors, trained by experienced business professionals, provide guidance tailored to the unique workflows of founder-led SMEs.

As Susan Youngblood, an AI and Human Capital Expert, points out:

"CEOs lead the AI transformation by setting a clear roadmap and objectives and fostering a company culture that embraces AI".

External expertise should complement your internal efforts, ensuring your organisation develops the skills needed to sustain AI adoption well into the future.

Conclusion: Next Steps for AI Literacy

To build on the checklist's insights, it's time to take actionable steps to improve your AI literacy. As Deloitte Malta puts it, "AI literacy is not about becoming a data scientist or a programmer – it's about developing the ability to understand, question, and collaborate with AI tools intelligently". While 90% of executives recognise AI's potential for revenue growth, only 1% have fully integrated it into their workflows. The key is to act now, rather than waiting for the "perfect" moment. These steps align with the broader checklist, addressing strategy, data, ethics, and team skills to ensure a well-rounded approach to adopting AI.

Start by experimenting with AI tools yourself before introducing them to your team. This hands-on approach not only builds credibility but also helps you understand the tools' capabilities and limitations. Prioritise high-value use cases - tasks that are repetitive, high-volume, or prone to errors - where AI can clearly deliver results. Be sure to document your current performance metrics, such as cycle times, error rates, and labour hours, so you can measure the impact effectively.

In the first 30 days, identify 2–3 specific use cases, establish baseline metrics, and assign ownership to key team members. Over the next 30–60 days, implement AI tools in "shadow mode", where they operate under human supervision. As Isabella Inari Cioffi from Deloitte Malta notes:

"The future belongs not to those replaced by AI, but to those who know how to harness it effectively".

Transparency is essential for building trust. Be open about your AI objectives and create feedback channels where employees can share their suggestions. With 78% of knowledge workers already using unauthorised AI tools, your role is to guide this enthusiasm into productive and approved uses.

If your internal expertise is lacking, consider external support. Platforms like AgentimiseAI offer leadership-focused guidance, connecting teams with virtual C-suite advisors trained by seasoned business professionals. These advisors provide tailored advice, helping you strengthen your AI initiatives without the need for full-time senior hires. Combining external insights with internal efforts will help your organisation develop lasting AI capabilities and turn strategy into measurable results. By taking these steps, you can bridge the gap between theory and practice, ensuring AI delivers real business value.

FAQs

What’s the first AI use case I should pick for quick ROI?

Automating meeting summaries and action items is a great place to start. Tools such as Otter.ai, Fireflies.ai, and Microsoft Copilot can handle these tasks efficiently, saving time and improving productivity in as little as 30 days. By targeting use cases that eliminate repetitive tasks, you can see immediate results and free up resources for more strategic work.

How can I tell if our data is good enough for AI?

To determine if your data is ready for AI, focus on its relevance, accuracy, and whether it’s properly labelled to meet your business objectives. Quality data should reduce bias, remain consistent, and comply with privacy and accuracy standards. Regular testing for challenges like bias or data drift is essential, alongside maintaining governance through clear ownership and well-defined KPIs. Interestingly, smaller, well-labelled datasets can often deliver better results than large, poorly-prepared ones, especially when tailored to specific goals.

What AI risks can’t I delegate to IT?

AI-related risks that call for executive attention include ethical blind spots, accountability challenges, and regulatory compliance concerns. These issues extend beyond the technical expertise of IT teams, requiring strong governance and leadership to ensure AI is used responsibly.