AI Compliance Reporting: What CEOs Need to Know

16 Apr 2026

What CEOs need to know about UK and EU AI rules, reporting essentials, risks, tools and 2026 compliance deadlines.

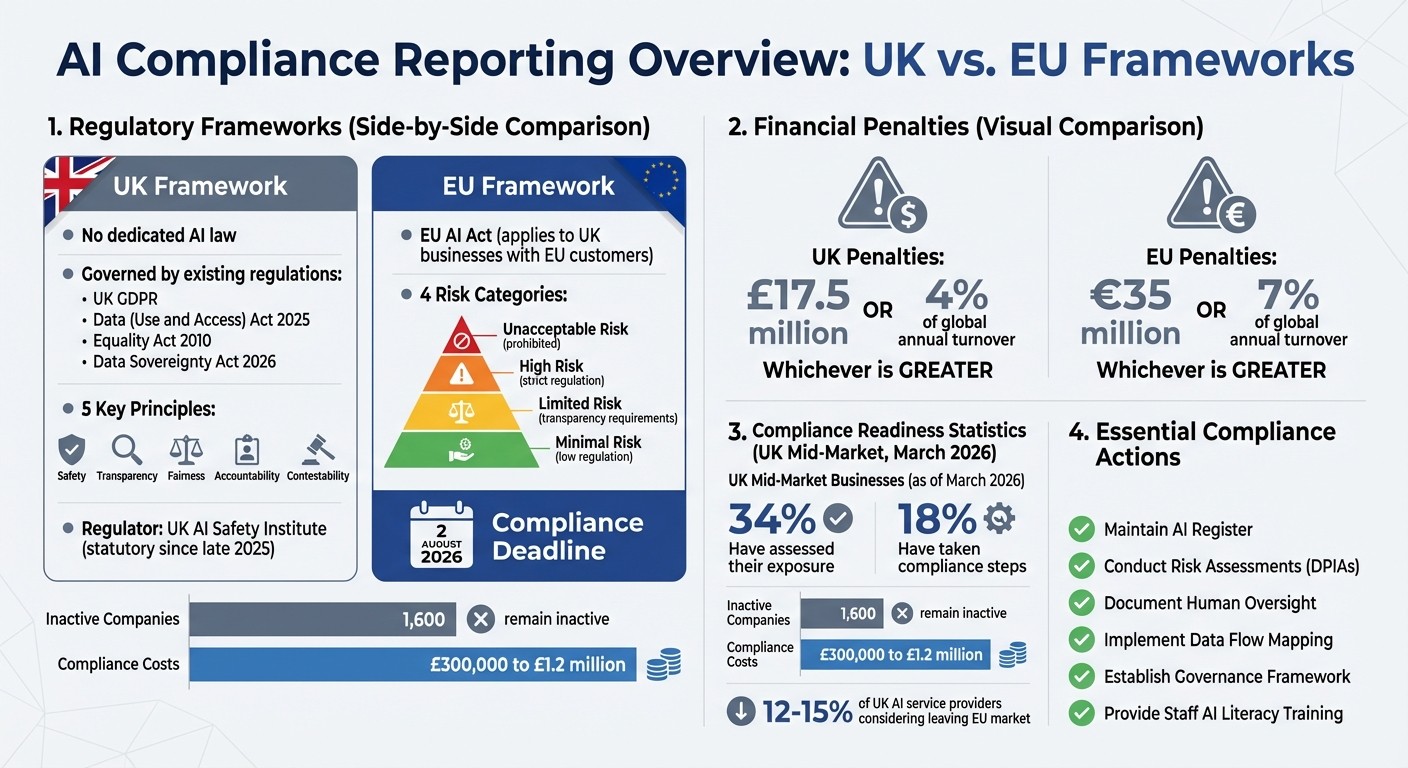

AI compliance reporting documents how your systems meet UK laws such as the UK GDPR, the Equality Act 2010, and consumer protection rules. While there’s no specific AI law in the UK, regulators such as the ICO and CMA enforce compliance using principles like safety, transparency, and accountability. Failing to comply can lead to fines of up to £17.5 million or 4% of global annual turnover (whichever is higher), reputational damage, and operational risks.

Key points for CEOs:

UK Compliance: Governed by existing laws, including the Data (Use and Access) Act 2025, which updates GDPR rules.

EU AI Act: Applies to UK businesses interacting with EU customers, with a compliance deadline of 2 August 2026.

Risks: Non-compliance can result in penalties reaching 7% of global turnover under EU rules.

Reporting Essentials: Maintain an AI register, conduct risk assessments, and document human oversight processes.

To simplify compliance, tools like ReporticaAI and Klarvo can help manage reporting and oversight efficiently. Training and workshops are also available to guide non-technical teams through the process. Start preparing now to avoid penalties and ensure smooth operations.

UK vs EU AI Compliance: Key Regulations, Penalties, and Deadlines for 2026

UK Regulations for AI Compliance

The UK doesn’t have a dedicated law specifically for AI. Instead, compliance is managed through existing regulations and a principles-based approach. Regulators enforce AI compliance based on five key principles: safety, transparency, fairness, accountability, and contestability. The legal framework draws from laws like the UK GDPR, the Data (Use and Access) Act 2025, the Equality Act 2010, and the Data Sovereignty Act 2026. Notably, the latter defines personal AI data as "Private Cognitive Property", safeguarding it from warrantless access.

As Anju Kushwaha, Founder of Relishta, highlights:

The UK's stance has created a 'Third Way' between the US's laissez-faire approach and the EU's heavy-handed AI Act.

This approach balances innovation with robust safeguards, especially for high-risk AI applications. However, navigating this framework becomes more complex when dealing with EU regulations.

The EU AI Act and How It Applies to UK Businesses

Although UK compliance focuses on domestic laws, businesses operating internationally must also meet external standards. Brexit hasn’t shielded UK companies from the EU AI Act. If your business sells to EU customers, has EU-based subsidiaries, or processes data from EU residents, you’re within its scope. The Act has extraterritorial reach (part of the wider "Brussels Effect"), so it can apply regardless of your company’s location. Peter Vogel, CEO of Helium42, explains:

UK organisations are subject to EU AI Act compliance not because they are headquartered in the EU, but because their customers or data subjects are.

The Act categorises AI systems into four risk levels:

Unacceptable Risk: Prohibited systems.

High Risk: Strictly regulated applications like recruitment tools or credit scoring.

Limited Risk: Systems with transparency requirements.

Minimal Risk: Low-regulation systems.

High-risk systems must undergo conformity assessments and be registered in an EU database. Non-compliance can result in fines of up to €35 million or 7% of global annual turnover, whichever is greater; that top tier applies to prohibited practices, and lower caps apply to most other breaches. Additionally, Article 4 requires providers and deployers to ensure a sufficient level of AI literacy among the staff who operate and use AI systems on their behalf.
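The "whichever is greater" rule is easy to misread, so here is a minimal sketch of the arithmetic. It uses only the €35 million and 7% figures quoted above; the function name and the example turnover are illustrative assumptions, and the result is a theoretical ceiling, not a prediction of any actual fine.

```python
from decimal import Decimal

# Figures quoted in this article; confirm against the current legal text.
EU_AI_ACT_FIXED_CAP_EUR = Decimal("35000000")
EU_AI_ACT_TURNOVER_RATE = Decimal("0.07")


def max_fine(global_turnover: Decimal, fixed_cap: Decimal, turnover_rate: Decimal) -> Decimal:
    """Return the higher of the fixed cap and the turnover-based cap."""
    return max(fixed_cap, global_turnover * turnover_rate)


# Hypothetical company with EUR 800 million global annual turnover.
exposure = max_fine(Decimal("800000000"), EU_AI_ACT_FIXED_CAP_EUR, EU_AI_ACT_TURNOVER_RATE)
print(f"Maximum theoretical fine: EUR {exposure:,.0f}")
```

For a smaller company the fixed cap dominates: at €100 million turnover, 7% is only €7 million, so the ceiling stays at €35 million.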

The compliance deadline is 2 August 2026. However, as of March 2026, only 34% of UK mid-market businesses have assessed their exposure, and just 18% have taken steps toward compliance. Around 1,600 UK mid-sized companies likely affected by the deadline remain inactive. Facing compliance costs ranging from £300,000 to £1.2 million, 12–15% of UK AI service providers are considering leaving the EU market altogether.

UK AI Safety Institute Guidance

The UK AI Safety Institute (UK AISI) became a statutory regulator in late 2025. Under the 2026 Data Sovereignty Act, high-risk systems in sectors like healthcare, finance, and energy must include a 72-hour "Offline-First" resilience mode to ensure functionality during cloud outages.

The Institute has introduced a tiered safety framework:

Tier 1: General-purpose open models must implement "Safety Weights" - localised filters designed to block harmful content generation.

Tier 2: High-stakes proprietary models (e.g., GPT-5 or Claude 4) must provide a "Zero-Knowledge Proof of Alignment" to confirm compliance with UK safety laws without disclosing proprietary code.

What to Include in Your AI Compliance Report

An AI compliance report is where you document the key decisions, controls, and oversight measures for your AI systems. The Information Commissioner's Office (ICO) requires clear evidence of risk-based decision-making. Your report should focus on three main areas: a detailed inventory of your AI systems, risk assessments for each system, and governance structures that ensure proper human oversight.

AI System Inventory and Documentation

Start by creating an AI Register. This is a full list of all the AI systems your organisation uses, including features integrated into tools like CRMs, accounting software, or HR platforms. For each system, include:

The system's name and its business purpose

The human supervisor responsible for oversight

The types of data the system processes

Additionally, you’ll need data flow mapping. This involves charting how data moves through each system, including its sources, how it’s processed, and the outputs it generates. Be sure to specify the lawful basis for each data processing activity - whether it's consent, legitimate interest, or a contractual requirement. This step shows you've considered principles like data minimisation and purpose limitation from the start.
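If your team keeps the register in a structured form rather than a free-text document, gaps become easy to spot. The sketch below is one possible shape for a register entry covering the fields and data flow details described above; the class names, field names, and example system are all hypothetical, not a format prescribed by the ICO.

```python
from dataclasses import dataclass, field
from enum import Enum


class LawfulBasis(Enum):
    CONSENT = "consent"
    LEGITIMATE_INTEREST = "legitimate interest"
    CONTRACT = "contract"


@dataclass
class DataFlow:
    source: str              # where the data comes from
    processing: str          # what the system does with it
    output: str              # what the system produces
    lawful_basis: LawfulBasis


@dataclass
class RegisterEntry:
    system_name: str
    business_purpose: str
    human_supervisor: str
    data_types: list[str]
    data_flows: list[DataFlow] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """List the parts of this entry that are still missing."""
        missing = []
        if not self.human_supervisor.strip():
            missing.append("human_supervisor")
        if not self.data_types:
            missing.append("data_types")
        if not self.data_flows:
            missing.append("data_flows")
        return missing


# Hypothetical entry for an AI feature embedded in an HR platform.
entry = RegisterEntry(
    system_name="CV screening assistant",
    business_purpose="Shortlist applicants for interview",
    human_supervisor="",
    data_types=["CVs", "application forms"],
)
print(entry.gaps())  # ['human_supervisor', 'data_flows']
```

Here the entry names no supervisor and maps no data flows, so both show up as gaps to close before the register is audit-ready.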

Risk Assessments and How to Address Them

For high-risk AI uses, such as large-scale profiling or handling sensitive data, a Data Protection Impact Assessment (DPIA) is essential.

"DPIAs are a key part of data protection law's focus on accountability and data protection by design. They can effectively act as roadmaps for you to identify and control the risks to rights and freedoms that using AI can pose."

Your DPIA should identify two types of risks: allocative harms (e.g., decisions that affect access to jobs, goods, or services) and representational harms (e.g., stereotyping or denigration). Include technical details like accuracy rates, error margins, and how you monitor for concept drift - when an AI model’s performance declines due to changing real-world conditions. If your DPIA uncovers risks that cannot be adequately mitigated, you’re required to consult the ICO before proceeding.
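To make the concept drift point concrete, here is a minimal sketch of one way a technical team might monitor it: compare recent accuracy with the accuracy recorded at deployment and raise a flag when the drop exceeds a tolerance. The function names and the 5-percentage-point tolerance are assumptions for illustration; real monitoring would normally use larger samples and statistical tests.

```python
from statistics import mean


def accuracy(outcomes: list[bool]) -> float:
    """Share of predictions that turned out to be correct."""
    return mean(1.0 if ok else 0.0 for ok in outcomes)


def drift_alert(baseline: float, recent_outcomes: list[bool], tolerance: float = 0.05) -> bool:
    """Flag possible concept drift when recent accuracy falls more than
    `tolerance` below the accuracy recorded at deployment."""
    return baseline - accuracy(recent_outcomes) > tolerance


# Hypothetical model: 92% accurate at launch, 8 of the last 10 decisions correct.
recent = [True] * 8 + [False] * 2
print(drift_alert(baseline=0.92, recent_outcomes=recent))  # True
```

Recording the baseline, the tolerance, and each alert in the DPIA gives reviewers evidence that drift is being watched rather than assumed away.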

Human Oversight and Governance Frameworks

Your report must prove that humans are actively involved in AI decision-making, not just signing off on outputs without review. Document the following:

Who reviews the AI outputs

Their authority to override decisions

Their understanding of the system's logic

Training records to show they’re equipped to oversee AI outcomes
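The four items above can be captured as a simple record per reviewer. The sketch below is illustrative only: the field names, the one-year training window, and the "no overrides recorded" heuristic for spotting rubber-stamping are assumptions, not regulatory thresholds.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class OversightRecord:
    reviewer: str
    can_override: bool             # authority to change the AI decision
    understands_logic: bool        # can explain how the system reaches outputs
    last_trained: Optional[date]   # most recent oversight training, if any
    reviewed: int                  # decisions reviewed in the period
    overridden: int                # decisions changed by the reviewer

    def issues(self, today: date, max_training_age_days: int = 365) -> list[str]:
        """Return the oversight weaknesses this record reveals."""
        found = []
        if not self.can_override:
            found.append("no authority to override")
        if not self.understands_logic:
            found.append("system logic not understood")
        if self.last_trained is None or (today - self.last_trained).days > max_training_age_days:
            found.append("training missing or out of date")
        if self.reviewed > 0 and self.overridden == 0:
            found.append("no overrides recorded")
        return found


# Hypothetical reviewer of a credit-scoring system.
record = OversightRecord("Head of Credit", True, True, date(2024, 1, 10), reviewed=250, overridden=0)
print(record.issues(today=date(2026, 4, 16)))
```

A reviewer who has never overridden a decision is not necessarily failing, but it is a prompt to check that reviews are genuine.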

"Human intervention should involve a review of the decision, which must be carried out by someone with the appropriate authority and capability to change that decision."

Clarify your governance structure, detailing who is accountable for AI-related decisions, how individuals can contest those decisions, and how complaints are handled. For complaints, ensure your report outlines a clear process: individuals must complain to you first, and you must acknowledge their complaint within 30 days. Having this mechanism in place is critical for compliance.
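The 30-day acknowledgement window is simple to track automatically. This is a minimal sketch that treats the window as 30 calendar days from the date of receipt, which is an assumption; check how the period should be counted for your organisation. The function names are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional

ACKNOWLEDGEMENT_WINDOW_DAYS = 30  # figure quoted in this article


def acknowledgement_due(received: date) -> date:
    """Latest date by which the complaint should be acknowledged."""
    return received + timedelta(days=ACKNOWLEDGEMENT_WINDOW_DAYS)


def is_overdue(received: date, acknowledged: Optional[date], today: date) -> bool:
    """True if the complaint was acknowledged late or is still unacknowledged past the deadline."""
    deadline = acknowledgement_due(received)
    if acknowledged is not None:
        return acknowledged > deadline
    return today > deadline


print(acknowledgement_due(date(2026, 4, 16)))  # 2026-05-16
```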

Formats and Tools for Compliance Reporting

Once you've gathered your compliance data, it's crucial to present it in a way that suits your audience - whether that's regulators, auditors, or internal teams.

Common Reporting Formats Explained

Data Protection Impact Assessments (DPIAs) are a legal requirement for high-risk AI under UK GDPR Article 35. These are especially necessary for activities like systematic profiling or large-scale automated decision-making. Typically, DPIAs are shared in structured document formats such as PDFs or DOCX files.

For technical audiences, Model Cards and Datasheets are key. They outline how machine learning models perform under different conditions and provide details about the training data. To ensure accessibility, it's often helpful to pair these detailed descriptions with a simplified summary for less technical stakeholders. This two-tier documentation approach - technical details for auditors and a high-level overview for others - can make your reporting more effective.

Argument-Based Assurance Cases are another option, particularly for documenting claims about safety or fairness. These cases organise evidence around specific claims, creating a clear audit trail. This structured format is especially useful for demonstrating compliance with standards. Here's a quick overview of how these formats align with UK regulations:

| Format | Best For | UK Regulatory Alignment |
|---|---|---|
| DPIA | High-risk AI deployments | Mandatory under UK GDPR Art 35 |
| Assurance Case | Safety and fairness claims | Accountability Principle |
| Model Cards | Technical transparency | Transparency & Explainability Guidance |
| Privacy Notice | Public-facing transparency | UK GDPR Articles 12–14 |
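An argument-based assurance case is essentially a tree: a top-level claim, supported by subclaims, each backed by evidence. The sketch below shows one hypothetical way to represent that tree and to find claims that nothing yet supports; the class name, claims, and evidence references are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    statement: str
    evidence: list[str] = field(default_factory=list)       # references to documents
    subclaims: list["Claim"] = field(default_factory=list)

    def unsupported(self) -> list[str]:
        """Return every claim that has neither evidence nor subclaims.
        A parent claim is treated as resting on its subclaims."""
        if not self.evidence and not self.subclaims:
            return [self.statement]
        found = []
        for sub in self.subclaims:
            found.extend(sub.unsupported())
        return found


# Hypothetical fairness case for a recruitment tool.
case = Claim(
    "The screening tool treats applicants fairly",
    subclaims=[
        Claim("Selection rates are monitored by protected characteristic",
              evidence=["Q1 fairness monitoring report"]),
        Claim("Rejected applicants can request human review"),
    ],
)
print(case.unsupported())  # ['Rejected applicants can request human review']
```

The output is the audit trail's to-do list: each unsupported claim needs evidence attached before the case is presented to an auditor.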

Tools That Make Compliance Reporting Easier

Beyond choosing the right formats, there are tools available to simplify compliance reporting. For example, the ICO's AI and Data Protection Risk Toolkit is a free, Excel-based tool designed to help UK businesses manage risks related to individuals' rights. It's particularly useful for smaller organisations with limited resources. Similarly, the AI Management Essentials (AIME) tool from the Department for Science, Innovation and Technology uses an interactive decision-tree format to help SMEs assess governance needs.

For automated solutions, tools like ReporticaAI and aireporttool.eu can significantly cut down on documentation time. ReporticaAI generates reports aligned with regulations and ensures UK data residency, while aireporttool.eu can reduce the time needed for compiling reports from days to about 15 minutes.

If you're overseeing multiple AI systems, governance platforms such as Klarvo and VerifyWise are worth considering. These platforms offer dashboards for managing AI inventories, audit-ready evidence storage, and real-time compliance tracking. Klarvo provides a free plan for one AI system, while VerifyWise offers a free trial with demo-based pricing for enterprise features. These platforms can classify an AI system against regulatory frameworks in under a minute using just four data fields.

"The key objective is to provide good documentation that can be understood by people with varying levels of technical knowledge..." - Information Commissioner's Office

When selecting tools, ensure they support both PDF (for precise regulatory submissions) and DOCX (for internal editing and reviews). For industries handling sensitive data, prioritise tools that guarantee UK or EU data storage to comply with local data protection laws.

Next, learn how AgentimiseAI can enhance your compliance efforts with tailored tools and training.

How AgentimiseAI Supports Your Compliance Work

AgentimiseAI is here to simplify the often-daunting task of meeting AI compliance requirements, especially for UK-based CEOs, MDs, and operations leaders who may not have a technical background. Their specialised services are designed to bridge the gap and make compliance achievable.

AI Leadership Training for Non-Technical Teams

The AI Leadership Training sessions (£1,050 per session) focus on empowering non-technical teams to take charge of AI ethics and compliance. With 80% of executives agreeing that business leaders - not tech specialists - should oversee AI ethics, it's concerning that fewer than 25% have implemented actionable compliance workflows. These half-day, hands-on sessions guide participants through:

Mandatory human oversight essentials

Mitigating risks associated with Shadow AI

Integrating ethics into governance practices

The training also provides practical tools, including maintaining an AI register, drafting a concise internal usage policy, and assigning executive ownership. These steps are key for achieving immediate compliance.

AI Discovery Workshops for Compliance Planning

The AI Discovery Workshops (£1,050 per workshop) address a major challenge: over half of companies don’t have a basic inventory of their AI systems, making compliance nearly impossible. These workshops focus on execution by helping organisations:

Build a comprehensive inventory of AI systems

Classify systems by risk based on regulatory standards

Produce audit-ready documentation

Using an "Outcome First, AI Next" approach, the sessions identify compliant AI use cases with strong returns, establish escalation paths for human oversight, and create documentation for regulatory inspections. This planning is essential, especially with potential fines under the EU AI Act reaching up to €35 million or 7% of global annual turnover.

Custom AI Agent Development with Compliance Built In

AgentimiseAI’s Custom AI Agent Development (starting at £1,900) ensures compliance is embedded from the ground up. These bespoke agents are designed to meet strict regulatory standards, including the Data (Use and Access) Act 2025. Key features include:

Built-in data protection and model validation

Tamper-evident audit trails with machine-readable records

Full data sovereignty by processing information in-house
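For readers wondering what a tamper-evident, machine-readable audit trail can look like, one common general technique is a hash chain: each record stores a hash of the previous one, so any later edit breaks the chain. The sketch below illustrates that technique with Python's standard library; it is not a description of AgentimiseAI's implementation, and the function names and event fields are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    """SHA-256 over a canonical JSON encoding of the event and the previous hash."""
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Add an event to the log, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, {"system": "loan-agent", "decision": "refer", "reviewer": "J. Patel"})
append_entry(log, {"system": "loan-agent", "decision": "decline", "reviewer": "J. Patel"})
print(verify(log))   # True

log[0]["event"]["decision"] = "approve"  # simulate tampering with an old record
print(verify(log))   # False
```

Because each entry is plain JSON-compatible data, the same log can be exported for regulators or auditors without conversion.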

The agents are tailored to integrate seamlessly with your existing systems while adhering to both UK and EU regulations. For added value, you can bundle the Training and Workshop for £1,800 (a saving of £300, or about 14%, on the combined £2,100 price) and receive a free AI policy template. This bundle offers a practical starting point for turning compliance into an active, ongoing governance process.

These services are designed to fit neatly into your broader compliance strategy, ensuring you’re prepared for the challenges of AI governance.

Conclusion

AI compliance reporting has shifted from being a choice to a mandatory legal requirement. The ICO's first AI-specific enforcement action in 2024 marked the beginning of stricter oversight. With the Data (Use and Access) Act 2025 now in effect and the EU AI Act's high-risk obligations set to take hold on 2 August 2026, the regulatory framework is evolving quickly. Under UK GDPR, any AI system that processes personal data falls under scrutiny, with non-compliance risking fines of up to £17.5 million or 4% of global annual turnover, whichever is higher. Postponing compliance efforts could leave businesses exposed to significant penalties.

The financial risks of inaction are high. Investing in workforce upskilling is 5–8 times more cost-effective than hiring senior AI specialists. Acting early not only reduces costs but also avoids last-minute fixes and reliance on expensive consultants. Experts agree that UK organisations already face compliance challenges under existing laws, making proactive measures essential.

To bridge the gap, AgentimiseAI offers practical solutions tailored for non-technical leaders. Their AI Leadership Training (£1,050) and AI Discovery Workshops (£1,050) are designed to address the execution challenges faced by 84% of organisations unable to pass agent compliance audits. For more comprehensive support, the Custom AI Agent Development service (starting at £1,900) integrates compliance features like audit trails, data sovereignty, and human oversight directly into your systems. Plus, the Training and Workshop can be bundled for £1,800, a saving of £300 (about 14%), with a free AI policy template included to kickstart your compliance framework.

FAQs

Does the EU AI Act apply to us?

Yes, businesses in the UK that develop, deploy, or use AI systems involving customers or data from the EU must comply with the EU AI Act. This is because the Act has an extraterritorial scope, meaning its regulations apply not just within the EU but also to organisations outside the EU that interact with EU markets or process EU data.

What must be in an AI compliance report?

An AI compliance report needs to outline how AI systems manage personal data while adhering to UK GDPR principles such as transparency, fairness, and accountability. It should also include detailed risk assessments, like Data Protection Impact Assessments (DPIAs), to identify and address potential privacy risks. Additionally, the report must showcase evidence of continuous monitoring and governance practices to ensure all UK legal obligations are met.

How can I prove human oversight works?

To demonstrate that human oversight is effective, it must meet three key criteria: it should be meaningful, technically enforceable within the system’s design, and proportionate to the level of risk posed by the AI system. This isn’t about ticking boxes with basic reviews or approvals. Instead, there needs to be clear evidence that oversight mechanisms are actively built into the system and function effectively in real-world scenarios.