AI Bias: Problem and Solutions for SMEs

5 Mar 2026

Unchecked AI bias threatens SME decisions, reputation and legal compliance. Practical governance, audits and training can prevent costly harm.

AI bias is a critical issue for SMEs, leading to skewed decisions that can harm business, ethics, and compliance. This happens when flawed data or algorithms produce unfair outcomes, especially in areas like hiring, customer service, and financial decisions. SMEs are particularly vulnerable due to limited resources and reliance on off-the-shelf AI tools. Legal frameworks like the Equality Act 2010 and the Data (Use and Access) Act 2025 make addressing bias a legal obligation, not just a moral one.

Key Takeaways:

Risks of AI Bias: Recruitment tools favouring specific demographics, customer segmentation excluding certain groups, and strategic decisions based on flawed insights.

Legal and Financial Impact: Bias incidents can lead to fines (up to £6.5 million), reputational harm, and increased costs from customer churn and talent loss.

Solutions for SMEs:

Assign an "AI owner" to oversee tools and decisions.

Regularly audit AI systems for biased outputs.

Provide leadership training to improve AI literacy.

Use tools like the AI Management Essentials (AIME) self-assessment for compliance.

By implementing governance, audits, and training, SMEs can reduce bias risks, protect their reputation, and meet legal requirements.

What AI Bias Looks Like in Boardroom Systems

AI bias in boardroom decision-making can have a direct impact on recruitment, customer engagement, and strategic planning. When leadership teams rely on AI tools without fully understanding how they function, the resulting outputs can misguide major decisions. For example, recruitment systems might favour candidates from specific demographics, while customer segmentation tools could unintentionally exclude certain groups based on factors like postcodes or employment status. In strategic planning, AI systems trained on synthetic (AI-generated) data risk "model collapse", where critical errors and lack of nuance degrade the quality of insights provided to boardrooms.

Matthew Cole, Partner at Prettys Solicitors LLP, highlights the root of the issue:

"AI models learn from data. If the training data reflects biased decisions, incomplete records, or historical inequalities then the system will replicate and even amplify those biases".

A 2024 study from the University of Washington revealed that résumé screening tools powered by AI showed a preference for white-associated names in 85% of cases. Even when sensitive information like ethnicity or gender is excluded, AI can infer these details through indirect variables - such as job roles, part-time work status, or residential areas. This leads to what regulators refer to as "fairness through unawareness" failures.

These challenges prompt a deeper look at the origins of AI bias and the unique risks it poses to SME boardrooms.

Where AI Bias Comes From

AI bias often originates from flaws in the training data or the way systems are deployed. For instance, if historical data reflects a predominantly male hiring trend, the AI may prioritise similar profiles when making future recommendations. Sampling bias occurs when the training data doesn't accurately represent the broader population, while measurement bias stems from errors in how data is labelled or collected. For example, credit risk models may use flawed proxies that unfairly assess someone's reliability. Deployment bias arises when AI tools designed for one market, such as the US, are applied in another, like the UK, without proper adjustments. Additionally, data poisoning - where bad actors deliberately insert misleading information into public datasets - can reduce the performance of AI fraud detection tools by over 20%.
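Sampling bias of the kind described above can be checked with very little tooling. The sketch below is illustrative only: the function name `representation_gaps`, the 5-percentage-point tolerance, and the example figures are assumptions, not values from any regulator or vendor. It compares each group's share of the training data with its share of a reference population and reports the groups that are over- or under-represented.

```python
from collections import Counter


def representation_gaps(training_groups, reference_shares, tolerance=0.05):
    """Return groups whose share of the training data differs from the
    reference population share by more than `tolerance` (absolute)."""
    if not training_groups:
        raise ValueError("training_groups must not be empty")
    counts = Counter(training_groups)
    total = len(training_groups)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        if abs(observed - expected) > tolerance:
            # Positive = over-represented, negative = under-represented.
            gaps[group] = round(observed - expected, 3)
    return gaps


# Hypothetical example: 80 of 100 historical hires were recorded as male,
# while the reference population is assumed to be split evenly.
training = ["male"] * 80 + ["female"] * 20
reference = {"male": 0.5, "female": 0.5}
gaps = representation_gaps(training, reference)
print(gaps)
```

A non-empty result does not prove the model is biased, but it is a reasonable prompt to ask the vendor or data owner how the imbalance was handled.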

These technical vulnerabilities are particularly concerning for SMEs, as explored below.

Why SMEs Face Greater Risk

Small and medium-sized enterprises (SMEs) are more exposed to the risks of AI bias due to limited resources for addressing these technical challenges. Unlike larger corporations, which can afford custom AI solutions and thorough fairness audits, SMEs often rely on off-the-shelf tools. These commercially available systems are usually designed for larger enterprises and may not align with the specific needs of smaller businesses. Moreover, SMEs typically have smaller datasets, which increases the likelihood of sampling bias. When certain groups or scenarios are underrepresented, AI models can behave unpredictably.

Kate Motonaga, CFO and Enterprise Risk Management Expert, offers a stark reminder:

"The assumption that purchased tools or vendor platforms are fully reliable is no longer defensible. Directors must ensure leadership is asking the right questions".

Without transparency from vendors about how their models are trained and maintained, SME leaders often struggle to detect when AI systems are making biased decisions that could harm both operations and consumers.

Business and Ethical Consequences of AI Bias in SMEs

AI Bias Impact on SMEs: Financial Costs and Business Consequences

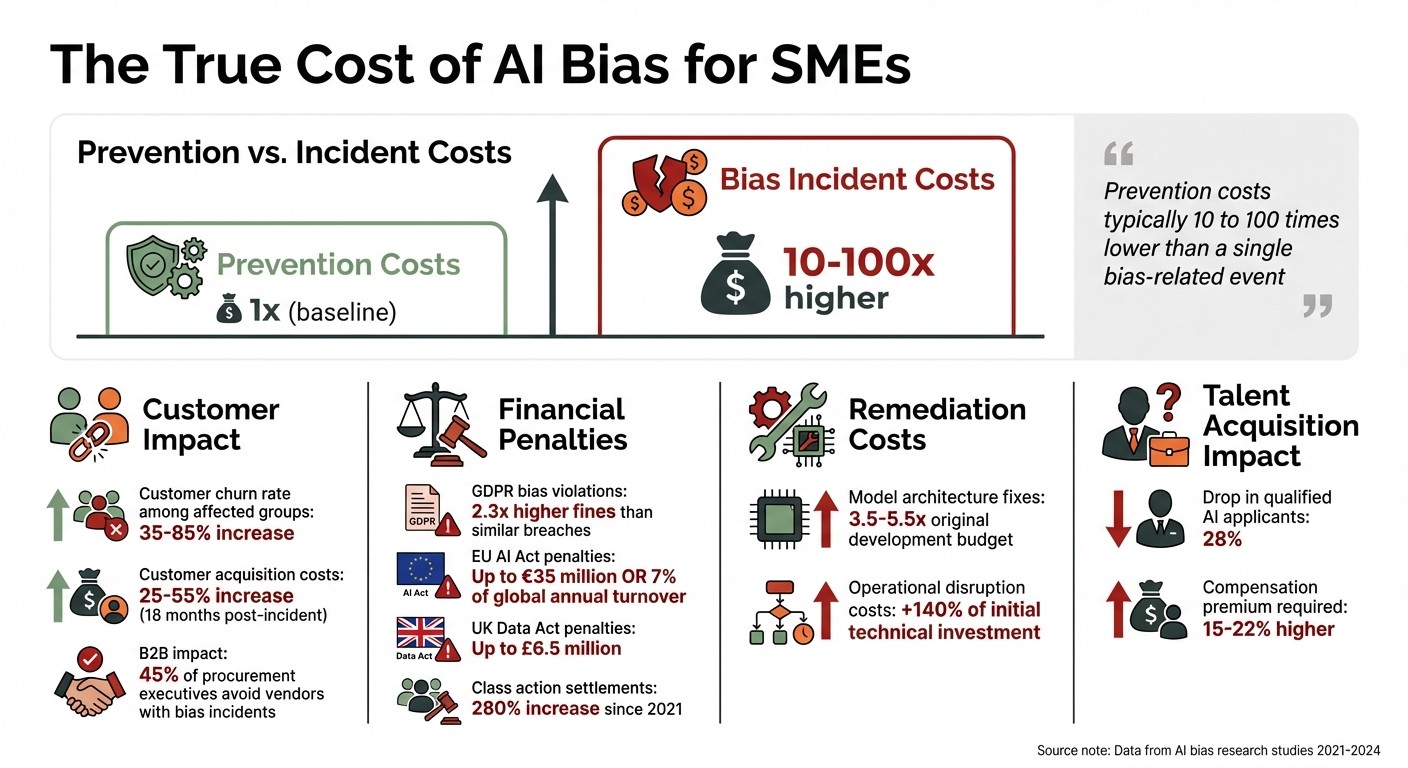

Unchecked AI bias isn't just a technical flaw - it’s a ticking time bomb for both business performance and ethical standing. For SMEs, the stakes are high. A single incident can lead to immediate financial setbacks and long-term erosion of trust among stakeholders. What’s more, preventing bias is far cheaper than dealing with its fallout. In fact, prevention costs are typically 10 to 100 times lower than the financial impact of a single bias-related event, which can wipe out hundreds of millions in market value.

O. Ivchenko, an AI researcher at Odessa National Polytechnic University, highlights this disparity:

"Prevention costs are tangible, immediate, and easily quantifiable... The costs of bias incidents, however, remain abstract until they materialise, at which point they typically exceed prevention costs by one to two orders of magnitude".

Beyond the numbers, ethical lapses also undermine the integrity of decision-making within organisations.

Reputation and Financial Losses

When AI bias affects business decisions, the consequences ripple through every layer of an SME. Not only does it tarnish leadership’s credibility, but it also damages the bottom line. Biased outcomes lead to reputational harm, which in turn drives away customers. Among affected demographic groups, customer churn rates jump by 35–85%, even if individuals within those groups weren’t directly impacted. And that’s not all - brand damage makes it harder to attract new customers, with acquisition costs rising by 25–55% in the 18 months following a bias incident.

The fallout isn’t limited to consumer-facing relationships. In the B2B space, 45% of procurement executives say they would avoid vendors linked to AI bias incidents. This makes ethical AI practices a non-negotiable factor for maintaining business partnerships.

Financial penalties add another layer of risk. Bias-related violations of GDPR result in fines that are, on average, 2.3 times higher than other breaches of similar severity. Under the EU AI Act, penalties for prohibited practices, such as discriminatory social scoring, can reach €35 million or 7% of global annual turnover. Meanwhile, class action settlements for AI bias have surged 280% since 2021.

Fixing these issues isn’t cheap either. Addressing bias embedded in model architecture can cost 3.5 to 5.5 times the original development budget. Operational disruptions during remediation efforts often add another 140% of the initial technical investment to the bill. Bias incidents also make it harder to attract top talent. SMEs with a history of bias see a 28% drop in qualified AI applicants and must offer 15–22% higher compensation to secure equivalent talent.
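To make the remediation multipliers concrete, here is a rough worked calculation. It assumes that the "initial technical investment" is the same figure as the original development budget, which the source does not state, and the £40,000 budget is purely hypothetical.

```python
def remediation_cost_range(development_budget):
    """Estimate the remediation cost range using the multipliers quoted above:
    3.5x to 5.5x the budget for fixing the model, plus a further 140% of the
    budget for operational disruption (assumed to share the same base)."""
    disruption = 1.4 * development_budget
    low = 3.5 * development_budget + disruption
    high = 5.5 * development_budget + disruption
    return round(low, 2), round(high, 2)


# Hypothetical SME that spent £40,000 building or configuring its model.
low, high = remediation_cost_range(40_000)
print(f"Estimated remediation cost: £{low:,.0f} to £{high:,.0f}")
```

On these assumptions the bill lands at roughly five to seven times the original spend, which is the gap between prevention and cure that the figures above point to.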

And these are just the business costs. The legal and regulatory landscape presents its own set of challenges.

Legal and Regulatory Compliance Issues

For SMEs in the UK, navigating the legal landscape isn’t straightforward. Two key frameworks - the Equality Act 2010 and UK GDPR - govern AI systems, but compliance with one doesn’t guarantee compliance with the other. As the Information Commissioner’s Office (ICO) explains:

"Demonstrating that an AI system is not unlawfully discriminatory under the EA2010 is a complex task, but it is separate and additional to your obligations relating to discrimination under data protection law".

Under UK GDPR, personal data processing must be "fair." Any AI system that results in unjust discrimination breaches this principle, even if the bias wasn’t intentional. The Data (Use and Access) Act 2025, which received Royal Assent in June 2025 and is due to take full effect by June 2026, sets out new rules for automated decision-making. SMEs will need to implement fairness safeguards and provide individuals with the right to challenge automated decisions.

Bias incidents can trigger enforcement under multiple frameworks simultaneously, including the EU AI Act, GDPR, and national consumer protection laws. For SMEs operating in or selling to the EU, systems used in recruitment, credit scoring, or healthcare are considered "high-risk." These systems require formal risk assessments, detailed logging, and human oversight.

Even testing models with sensitive data can lead to legal trouble. Under UK GDPR, SMEs must meet specific Article 9 conditions to process such data for bias mitigation. Separately, under the EU AI Act, supplying incorrect or misleading information to regulators can result in fines of up to €7.5 million (about £6.5 million).

The risks don’t stop at fines. Directors may also face personal liability for non-compliance. A growing trend is derivative shareholder litigation, where corporate boards are sued for breaching fiduciary duties by failing to manage AI risks. SMEs can’t afford to ignore these legal complexities - doing so could jeopardise not just their finances but their very existence.

How SMEs Can Reduce AI Bias

Tackling AI bias is a challenge, but it's not just for big corporations with endless resources. Small and medium-sized enterprises (SMEs) can take practical steps to address this issue without needing hefty budgets or dedicated AI teams. The key lies in setting up simple frameworks and making bias prevention part of routine operations.

Creating AI Governance Structures

For SMEs, governance doesn’t have to be overly complex. Start by assigning a specific individual - an "AI owner" - to be responsible for each AI tool your business uses. This person doesn’t need to be a tech expert, but they should have the authority to make decisions, coordinate reviews, and escalate any concerns.

Next, create a "One-Pager" for each AI system. This document should clearly state the tool’s purpose, the data it relies on, who oversees it, acceptable outcomes, and a "red button protocol" for reporting biased or inappropriate outputs. As the AIForSMEs Guide explains:

"If you can't explain how an AI tool is governed on one page, you don't yet have control over it".

Rather than developing a separate process, integrate AI risks into your existing organisational risk register. This approach keeps everything streamlined and ensures AI risks are reviewed alongside other business risks. Review cycles can be tailored based on risk levels: annual reviews for low-risk systems and quarterly checks for high-risk ones like those used in hiring or financial decisions.
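The One-Pager and the risk-based review cycle can be captured in a small record, whether that lives in a spreadsheet or in code. The sketch below is one possible shape, not a prescribed template: the class name `AIOnePager`, its field names, the example values, and the use of 91 days for "quarterly" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Review cadence from the text: annual for low risk, quarterly for high risk.
REVIEW_INTERVAL_DAYS = {"low": 365, "high": 91}


@dataclass
class AIOnePager:
    tool_name: str
    purpose: str
    data_sources: list[str]
    owner: str                      # the named "AI owner"
    acceptable_outcomes: list[str]
    red_button_contact: str         # where biased or inappropriate outputs are reported
    risk_level: str                 # "low" or "high"
    last_review: date

    def missing_fields(self) -> list[str]:
        """Names of fields that are still empty, i.e. gaps in governance."""
        return [name for name, value in vars(self).items() if not value]

    def next_review(self) -> date:
        """Date the next review falls due, based on the risk level."""
        return self.last_review + timedelta(days=REVIEW_INTERVAL_DAYS[self.risk_level])


# Hypothetical entry for a recruitment tool with no owner assigned yet.
page = AIOnePager(
    tool_name="CV screening assistant",
    purpose="Shortlist applicants for interview",
    data_sources=["applicant CVs"],
    owner="",
    acceptable_outcomes=["shortlist reviewed by a hiring manager"],
    red_button_contact="hr-lead@example.com",
    risk_level="high",
    last_review=date(2026, 1, 5),
)
print(page.missing_fields())
print(page.next_review())
```

An empty `owner` showing up in `missing_fields()` is exactly the kind of gap the One-Pager is meant to expose before the tool is relied on.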

A great example comes from Peterborough City Council, which introduced the "Hey Geraldine" AI chatbot in 2024 to assist social care staff. They established clear escalation steps, improved data protection for sensitive user information, and required quarterly risk updates for senior leadership.

Once governance is in place, the focus shifts to ongoing audits.

Running Bias Audits and Reviews

AI systems need regular check-ups - they’re not a "set it and forget it" tool. Over time, their accuracy and fairness can decline as real-world data changes. To counter this, systematic and frequent testing of AI outputs is crucial.

Bias audits should target three key areas: pre-processing (making sure training data is representative), in-processing (adding fairness measures during model training), and post-processing (adjusting outputs to fix imbalances). Before launching any AI tool, carry out an Equality Impact Assessment to assess its effects on protected groups under the Equality Act 2010.
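A basic output audit can start by comparing how often each group receives a favourable outcome. The sketch below computes selection rates per group and the ratio between the lowest and highest rate. The function names and the sample data are hypothetical, and the 0.8 alert threshold is an assumption borrowed from the US "four-fifths" rule of thumb; it is not a legal test under the Equality Act 2010.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, selected) pairs, where selected is a bool.
    Returns the share of favourable outcomes for each group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest group selection rate divided by the highest (1.0 = parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity to measure
    return min(rates.values()) / highest


# Hypothetical audit sample: 100 decisions per group.
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 20 + [("B", False)] * 80
)
rates = selection_rates(records)
ratio = impact_ratio(rates)
ALERT_THRESHOLD = 0.8  # assumed rule of thumb, adjust to your own risk appetite
print(rates, ratio, "investigate" if ratio < ALERT_THRESHOLD else "ok")
```

A low ratio is a signal to investigate rather than a verdict, since legitimate factors can explain some differences; the point is that the question gets asked on a schedule.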

Pay close attention to datasets for proxy variables - data points like postcodes or job titles that can unintentionally mirror discriminatory patterns. Combine this with a human-in-the-loop approach, where a person reviews AI outputs before they influence critical decisions. Research indicates that 31% of ethical failures in AI stem from biased outputs that harm certain groups unfairly.
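One simple way to test whether a field is acting as a proxy is to ask how much easier it becomes to guess a protected characteristic once that field is known. The sketch below assumes you hold a small audit sample in which the protected characteristic has been lawfully recorded; the function name `proxy_strength` and the data are hypothetical. Because it scores on the same sample it learns from, it will overstate the effect for fields with many distinct values, so treat it as a screening check only.

```python
from collections import Counter, defaultdict


def proxy_strength(feature_values, protected_values):
    """Return (baseline_accuracy, accuracy_with_feature).

    baseline: accuracy of always guessing the most common protected group.
    with feature: accuracy of guessing the most common protected group
    within each feature value (e.g. within each postcode area)."""
    n = len(protected_values)
    baseline = Counter(protected_values).most_common(1)[0][1] / n
    by_value = defaultdict(Counter)
    for feature, protected in zip(feature_values, protected_values):
        by_value[feature][protected] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / n


# Hypothetical audit sample: postcode area versus a protected group label.
postcodes = ["PE1", "PE1", "PE1", "PE2", "PE2", "PE2"]
groups = ["x", "x", "y", "y", "y", "x"]
baseline, with_feature = proxy_strength(postcodes, groups)
print(f"baseline {baseline:.2f}, knowing postcode {with_feature:.2f}")
```

A large jump from the baseline suggests the field carries information about the protected characteristic and deserves closer review before it is used in decisions.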

For guidance, SMEs can use the UK Government's AI Management Essentials (AIME) self-assessment tool. It’s free and helps businesses align their processes with global standards like ISO/IEC 42001 and the NIST AI Risk Management Framework.

Building AI Knowledge in Leadership Teams

Given the ethical and legal stakes, leadership teams must have a basic understanding of AI bias. They don’t need to become experts but should know enough to ask the right questions and recognise potential issues. Without this knowledge, providing oversight or making informed decisions becomes impossible.

Training should focus on recognising bias and understanding escalation protocols. Leaders need to grasp how bias can creep into systems, identify risky proxy variables, and know when human oversight is essential. Familiarity with legal frameworks like UK GDPR, the Equality Act 2010, and the Data (Use and Access) Act 2025 is also critical.

For a typical SME with 50 employees, dedicating around 20% of a senior manager’s time to AI governance is often sufficient. Smaller businesses may only need about two hours a month. The time commitment is modest compared with the safeguards it provides.

Training platforms like AgentimiseAI are designed specifically for SMEs, offering leadership teams practical knowledge to manage AI responsibly. These platforms also provide advisory support tailored to each organisation’s workflows, making it easier to integrate AI into daily operations without compromising on ethics or compliance.

Maintaining Bias Prevention as AI Use Expands

As SMEs grow and integrate AI into areas like customer service and financial forecasting, the challenge of preventing bias becomes an ongoing responsibility. AI systems, even those designed to be fair at the outset, can start producing skewed or harmful outcomes as data patterns shift. This means bias prevention must be woven into every stage of the AI lifecycle.

Building Bias Prevention into AI Growth Plans

While regular bias audits are a good starting point, long-term strategies need to evolve alongside the technology. A useful framework for this is the Five Pillars of Ethical AI:

Fairness: Ensuring AI outcomes are equitable across different groups.

Accountability: Assigning clear responsibility to individuals or teams.

Transparency: Clearly documenting how AI systems operate.

Safety: Testing for edge cases to minimise risks.

Sustainability: Keeping an eye on model drift and adapting as needed.

These pillars help businesses maintain ethical AI practices as they scale.
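The Sustainability pillar's "keeping an eye on model drift" can be turned into a routine check. One widely used measure is the Population Stability Index (PSI), which compares how inputs or scores were distributed when the model was adopted with how they are distributed now. The sketch below is a minimal version; the bucket names and counts are hypothetical, and the common reading that values above roughly 0.1 deserve attention and above roughly 0.25 indicate a major shift is an industry rule of thumb, not a regulatory threshold.

```python
import math


def population_stability_index(baseline_counts, current_counts, eps=1e-6):
    """PSI across matching buckets. Inputs are dicts of bucket -> count.
    Empty buckets are floored at `eps` to avoid dividing by or logging zero."""
    b_total = sum(baseline_counts.values())
    c_total = sum(current_counts.values())
    psi = 0.0
    for bucket in set(baseline_counts) | set(current_counts):
        b = max(baseline_counts.get(bucket, 0) / b_total, eps)
        c = max(current_counts.get(bucket, 0) / c_total, eps)
        psi += (c - b) * math.log(c / b)
    return psi


# Hypothetical risk-score buckets at launch versus the latest quarter.
at_launch = {"low": 50, "medium": 30, "high": 20}
this_quarter = {"low": 30, "medium": 30, "high": 40}
psi = population_stability_index(at_launch, this_quarter)
print(f"PSI = {psi:.3f}")
```

Running the same check separately for each customer or applicant group can show whether the shift is concentrated in one part of the population.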

Another essential approach is the Trustworthy AI Cycle, which focuses on defining the AI's purpose, ensuring high-quality data, setting ethical standards, rigorous testing, and ongoing monitoring. This cycle isn’t a one-off checklist - it's a continuous loop that evolves with the business.

For high-stakes decisions like hiring or lending, having a human-in-the-loop system is critical. This ensures that biased or incorrect AI recommendations are caught and corrected before causing harm. Additionally, an AI Ethics Board, comprising legal, technical, and business experts, can oversee high-impact deployments and ensure accountability. With the UK's Data (Use and Access) Act 2025 (which received Royal Assent on 19 June 2025 and is being phased in) and the EU AI Act enforcing stricter rules for high-risk AI systems, SMEs must treat bias audits as a regulatory requirement, not just a best practice.
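In practice, a human-in-the-loop rule is a gate: the AI's suggestion for a high-stakes use case cannot take effect until a named person has approved or overridden it. The sketch below shows the idea under simple assumptions; the class, the function `finalise`, the status strings, and the set of high-stakes use cases are all hypothetical and would need to match your own workflow.

```python
from dataclasses import dataclass

# Use cases the text singles out as needing human review.
HIGH_STAKES_USES = {"hiring", "lending"}


@dataclass
class Recommendation:
    use_case: str
    subject_id: str
    suggested_action: str


def finalise(rec, reviewer=None, approved=None):
    """Return the action to take. High-stakes recommendations stay pending
    until a reviewer has explicitly approved or rejected them."""
    if rec.use_case in HIGH_STAKES_USES:
        if reviewer is None or approved is None:
            return "pending_human_review"
        if approved:
            return rec.suggested_action
        return f"overridden_by_{reviewer}"
    # Lower-risk use cases can proceed without sign-off.
    return rec.suggested_action


rec = Recommendation("hiring", "candidate-017", "reject")
print(finalise(rec))                                  # no reviewer yet
print(finalise(rec, reviewer="j.smith", approved=False))
```

Recording the reviewer's name alongside each decision also gives the AI owner or ethics board an audit trail to sample from.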

Using Customised AI Solutions for SMEs

Generic AI tools often fall short when it comes to addressing the unique needs of SMEs, especially in embedding bias prevention. Customised AI platforms offer a better alternative, allowing businesses to adopt a fairness-by-design approach. This means integrating bias mitigation as a measurable quality metric right from the development phase through to deployment. These platforms also enable continuous monitoring to detect model drift as new data is introduced.

For example, platforms like AgentimiseAI cater specifically to SMEs by offering tailored AI agents and leadership training. These tools provide both strategic advice and operational support, ensuring fairness scoring becomes a mandatory checkpoint before any new AI module is deployed. Another practical solution is synthetic data generation, which can help fill gaps in training data while protecting user privacy.

Conclusion: Managing AI Bias for Ethical Growth

The issue of AI bias calls for strong and proactive leadership. Left unchecked, it can erode trust, harm a company’s reputation, and undermine long-term business goals. For SME leadership, tackling bias head-on means building systems that promote fair decision-making, rather than reinforcing hidden prejudices. By integrating bias prevention into AI governance now, SMEs can avoid the financial and reputational risks that come with ethical missteps.

With the UK's Data (Use and Access) Act 2025 set to be fully enforced by June 2026, fairness in AI is no longer optional - it’s a legal requirement. This shift highlights the importance of tailored, efficient solutions. Custom AI platforms, like those from AgentimiseAI, offer a practical approach. These tools are built around fairness-by-design principles, include bias detection mechanisms, and provide leadership training to help non-technical teams identify potential bias. Priced at just £50–£200 per user annually, AI-powered training also boasts higher skill retention rates (40–60%) and greater scalability compared to traditional training methods.

FAQs

How can we spot AI bias if sensitive data is removed?

Removing sensitive fields does not remove bias, because AI can infer them from proxy variables such as postcodes, job titles, or part-time work status. Bias can still be detected by comparing outcomes across groups in a separate audit sample, checking whether individual inputs act as proxies for protected characteristics, and reviewing whether the training data is representative. Where sensitive data is needed for this testing, UK GDPR requires a valid Article 9 condition.

Technical tools, such as fairness algorithms or comprehensive bias audits, can uncover patterns of unfairness within the system. Additionally, regular monitoring plays a key role in continuously identifying and addressing potential bias as it arises. This proactive approach helps maintain fairness and accountability in AI systems.

What evidence should we ask vendors for to prove fairness?

When evaluating AI vendors, it's crucial to request evidence of how they tackle bias and discrimination in their systems. Ask for documentation that includes:

Results from bias testing

Detailed fairness assessments

Validation processes used to mitigate bias

Additionally, vendors should demonstrate ongoing monitoring efforts to identify and address any bias that may arise over time. This ensures their systems comply with UK data protection laws and anti-discrimination standards. By doing so, you can promote greater transparency and hold vendors accountable for their claims regarding fairness.

Which AI systems should SMEs audit first?

SMEs should start with the systems that carry the highest risk: tools that influence decisions about people, such as recruitment screening, credit or lending decisions, and customer segmentation. These are the areas where biased outputs are most likely to breach the Equality Act 2010 or UK GDPR. Next, list every other predictive analytics, automation, and decision-support tool in use, note who owns each one, and schedule lower-risk systems for annual review. Checking for bias before rolling out any new AI tool keeps the audit backlog from growing.