Checklist for SME AI Data Infrastructure Readiness

7 Apr 2026

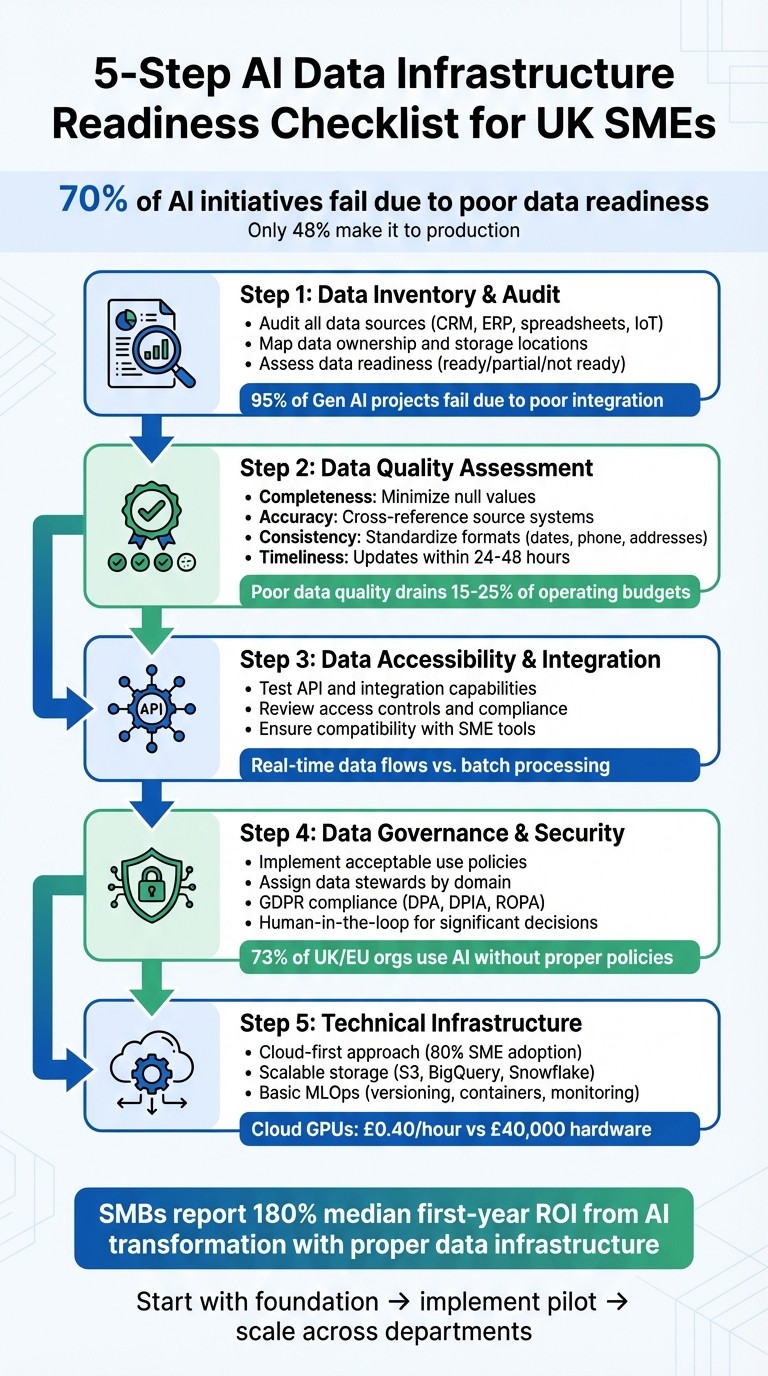

Step-by-step checklist for UK SMEs to audit data, improve quality, secure integrations and prepare cloud-ready AI infrastructure.

AI is only as good as the data it uses. For UK SMEs, diving into AI without assessing data infrastructure can lead to costly mistakes, compliance risks, and stalled projects. Here’s the reality: 70% of AI initiatives fail due to poor data readiness, and only 48% make it to production.

To avoid these pitfalls, SMEs need to focus on:

Data Inventory: Audit all data sources, from CRMs and spreadsheets to IoT devices.

Data Quality: Ensure accuracy, consistency, completeness, and timeliness to avoid unreliable AI outputs.

Integration: Test system compatibility (e.g., APIs, real-time data flows) and address silos.

Governance: Assign data stewards, enforce compliance (GDPR, UK laws), and set clear policies.

Infrastructure: Use cloud-first solutions, scalable storage, and basic MLOps practices for efficiency.

5-Step AI Data Infrastructure Readiness Checklist for UK SMEs

1. Data Inventory and Audit

Before diving into AI deployment, it’s crucial to take stock of all available data. Many UK SMEs overlook the breadth and depth of their data sources. For instance, customer relationship management (CRM) systems like Salesforce or HubSpot often sit alongside financial data housed in ERP platforms. Meanwhile, operational data might be scattered across spreadsheets, email logs, and cloud storage. In sectors like logistics and retail, IoT sensor streams and telemetry add even more complexity. Industry-specific data sources also play a role: healthcare SMEs depend on Electronic Health Records (EHR), financial firms manage AML and KYC records, and estate agencies draw from Multiple Listing Service (MLS) platforms.

Here’s a quick look at common data sources and their AI applications:

| Data Source Category | Typical SME Examples | AI Use Case |
|---|---|---|
| Customer Data | CRM (Salesforce, HubSpot), Email logs | Lead scoring, churn prediction |
| Financial Data | ERP systems, Invoices, Payroll | Cash flow forecasting, fraud detection |
| Operational Data | Supply chain logs, Inventory spreadsheets | Demand forecasting, route optimisation |
| Machine/IoT Data | Warehouse sensors, Telemetry | Predictive maintenance, real-time tracking |
| External Data | Market analytics, MLS, Social media | Property valuation, sentiment analysis |

Use this table as a guide to identify your data sources and their potential AI applications. The first step in your audit is to list every system that contributes data.

1.1 Identifying Your Data Sources

Begin by creating a comprehensive list of systems that generate or store data. This includes obvious platforms like CRM tools and accounting software but also extends to less formal sources such as spreadsheets, email attachments, and shared folders in Google Drive or Dropbox. Don’t forget about data from IoT devices, sensors, and telemetry. A 2025 MIT study highlighted that 95% of enterprise generative AI projects failed to impact profit and loss due to poor integration and lack of preparation. Identifying all data sources upfront is key to avoiding such pitfalls.

1.2 Mapping Data Ownership and Storage Locations

Once you’ve identified your data sources, the next step is mapping ownership. Assign a data steward to each domain to ensure accountability. This person will be responsible for maintaining data quality, documenting access levels, and ensuring compliance. Additionally, document where your data is stored - whether on-premises, in the cloud (e.g. AWS, Azure), or with third-party providers. Record who has access to each dataset, how often it’s updated, and the protocols for handling it. For example, in 2025, real estate data provider LPC collaborated with RTS Labs to unify siloed datasets, including inventory, demand, and sales data. By streamlining their data processes and assigning clear ownership, LPC achieved a 19% boost in market share and reduced data onboarding time by 70%.

1.3 Assessing Data Readiness

After identifying sources and mapping ownership, evaluate whether your datasets are ready for AI. This involves assessing structure, accessibility, integration, and compliance with regulations like GDPR and the Data Protection Act 2018. Classify datasets as ready, partially ready, or not ready. Poorly organised data can lead to inaccurate AI outputs, delayed integration, and increased legal risks. While over 75% of businesses use AI in some way, fewer than 20% have the foundational data practices required to scale effectively. Conducting a thorough audit will help you identify and address gaps before investing in AI tools.
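One way to keep the audit output usable is a small structured record per dataset. The sketch below is illustrative only: the `DatasetRecord` fields and the rule inside `classify_readiness` are assumptions made for this example, not a standard, and should be adapted to your own audit criteria.

```python
from dataclasses import dataclass


@dataclass
class DatasetRecord:
    """One row in a hypothetical data inventory."""
    name: str
    steward: str               # person accountable for the dataset
    location: str              # e.g. "on-premises", "AWS", "third-party"
    structured: bool           # stored in a consistent schema?
    accessible: bool           # reachable via API or export?
    compliance_reviewed: bool  # checked against UK GDPR / DPA 2018?


def classify_readiness(record: DatasetRecord) -> str:
    """Classify a dataset as ready, partially ready, or not ready.

    Assumed rule: all three checks pass -> ready; none pass -> not ready;
    anything in between -> partially ready.
    """
    passed = sum([record.structured, record.accessible, record.compliance_reviewed])
    if passed == 3:
        return "ready"
    if passed == 0:
        return "not ready"
    return "partially ready"


crm = DatasetRecord("CRM contacts", "Head of Sales", "third-party", True, True, False)
print(classify_readiness(crm))  # partially ready
```

Even a spreadsheet with these columns does the same job; the point is that every dataset gets an owner, a location, and an explicit readiness label.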

2. Data Quality Assessment

Once you've catalogued your data sources, the next step is to determine if the data is suitable for AI use. Poor-quality data can drain 15–25% of operating budgets, and for UK SMEs, this is often a real issue. Over time, data tends to accumulate inconsistently, with gaps and errors that only become obvious when you try to use it systematically. This assessment is the foundation for creating the strong data infrastructure necessary for reliable AI implementation.

"AI is only as good as the data it learns from - if the data is inaccurate, incomplete, outdated, or scattered across disconnected systems, even the most advanced AI produces misleading results." - An Ethics and Bias Mitigation Framework, Syniti

To evaluate your data, focus on four key dimensions: completeness, accuracy, consistency, and timeliness. Each of these factors plays a critical role in determining whether your datasets can effectively train and operate AI systems. A structured evaluation process will help you identify where improvements are needed before moving forward.

2.1 Checking Completeness and Accuracy

Start by assessing completeness. Calculate the percentage of non-null values for critical fields. For example, if 40% of email addresses in your CRM are missing, this is a major issue. Missing data in essential fields can significantly harm model performance. Prioritise filling these gaps, focusing on fields most relevant to your AI goals.
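As a rough illustration of the completeness calculation, the following sketch counts populated values for one field. The sample `contacts` list and the choice to treat empty strings as missing are assumptions for this example.

```python
def completeness(records: list[dict], field: str) -> float:
    """Return the percentage of records with a non-empty value for `field`."""
    if not records:
        return 0.0
    # Treat both None and "" as missing (an assumption; adjust to your data).
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return 100.0 * filled / len(records)


contacts = [
    {"name": "A", "email": "a@example.com"},
    {"name": "B", "email": None},
    {"name": "C", "email": ""},
    {"name": "D", "email": "d@example.com"},
    {"name": "E", "email": "e@example.com"},
]
print(completeness(contacts, "email"))  # 60.0
```

A result of 60.0 here corresponds to the "40% of email addresses missing" situation described above.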

For accuracy, cross-check your data against your "source-of-truth" systems. For instance, if your accounting software records a transaction at £5,000 but your ERP shows £5,500, you'll need to determine which system is correct and resolve the discrepancy. Automated business rules can help flag these inconsistencies, catching errors that often result from manual data entry.
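A simple automated rule for this kind of cross-check might look like the sketch below. It assumes both systems can export amounts keyed by a shared transaction ID; the IDs and the function name `find_mismatches` are hypothetical.

```python
from decimal import Decimal


def find_mismatches(source_of_truth: dict[str, Decimal],
                    other: dict[str, Decimal]) -> list[str]:
    """Return IDs whose amount differs from, or is missing in, `other`."""
    return sorted(
        txn_id
        for txn_id, amount in source_of_truth.items()
        if other.get(txn_id) != amount
    )


# Decimal avoids floating-point rounding surprises with currency values.
accounting = {"INV-001": Decimal("5000.00"), "INV-002": Decimal("120.50")}
erp = {"INV-001": Decimal("5500.00"), "INV-002": Decimal("120.50")}
print(find_mismatches(accounting, erp))  # ['INV-001']
```

The function only flags discrepancies; deciding which system is correct remains a human judgement.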

2.2 Ensuring Consistency and Standardisation

Once you've addressed completeness and accuracy, the next step is to ensure consistency. This means aligning formats and semantics across datasets. For example, if your CRM records dates as "07/04/2026", your ERP uses "2026-04-07", and your spreadsheets display "7th April 2026", AI systems may struggle to interpret patterns. Standardise formats, such as using ISO 8601 for dates (YYYY-MM-DD), international formats for phone numbers (+44), and Royal Mail PAF standards for addresses.
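The three date styles mentioned above can be normalised with a few lines of code. This is a minimal sketch, assuming day-first ordering for slashed dates, English month names, and UK numbers written with a leading 0; real phone-number validation is more involved and usually better left to a dedicated library.

```python
import re
from datetime import datetime

# Assumed input formats; "%d/%m/%Y" treats slashed dates as day-first (UK style).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d %B %Y")


def to_iso_date(raw: str) -> str:
    """Convert a date string in one of the assumed formats to YYYY-MM-DD."""
    # Strip ordinal suffixes such as "7th" -> "7".
    cleaned = re.sub(r"(\d+)(st|nd|rd|th)\b", r"\1", raw.strip())
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognised date format: {raw!r}")


def to_uk_international(phone: str) -> str:
    """Rewrite a UK number with a leading 0 into +44 form (simplified)."""
    digits = re.sub(r"[^\d+]", "", phone)
    if digits.startswith("+44"):
        return digits
    if digits.startswith("0"):
        return "+44" + digits[1:]
    raise ValueError(f"Cannot normalise phone number: {phone!r}")


for value in ("07/04/2026", "2026-04-07", "7th April 2026"):
    print(to_iso_date(value))  # 2026-04-07 each time
```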

Consistency also extends to semantics. For example, if your sales team defines an "active customer" as someone who purchased within six months, but your finance team uses a 12-month definition, your AI system will receive conflicting information. Create shared definitions for key terms and document them in a centralised glossary accessible to all teams. This ensures that training and real-world data use the same definitions, avoiding silent errors in AI predictions.

2.3 Measuring Data Timeliness

For AI applications like demand forecasting or fraud detection, data must be updated frequently - ideally within 24–48 hours. Stale data compounds "model drift", where AI accuracy declines as real-world patterns shift away from the data the model was trained on. Set up automated alerts to notify your team when data ingestion falls behind schedule.
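A staleness check can be as small as comparing each dataset's last load time with an agreed maximum age. The sketch below assumes you can obtain a last-updated timestamp per dataset; the dataset names and the 48-hour threshold are illustrative.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_stale(last_updated: datetime, max_age: timedelta,
             now: Optional[datetime] = None) -> bool:
    """True when the dataset has not been refreshed within `max_age`."""
    now = now or datetime.now(timezone.utc)
    return now - last_updated > max_age


# Fixed "now" so the example is reproducible.
now = datetime(2026, 4, 7, 12, 0, tzinfo=timezone.utc)
last_loads = {
    "crm": datetime(2026, 4, 7, 6, 0, tzinfo=timezone.utc),
    "inventory": datetime(2026, 4, 4, 9, 0, tzinfo=timezone.utc),
}
stale = [name for name, ts in last_loads.items()
         if is_stale(ts, timedelta(hours=48), now)]
print(stale)  # ['inventory']
```

In practice the resulting list would feed whatever alerting channel the team already uses, such as email or a chat notification.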

Assign a Data Owner for each domain to ensure data remains fresh. For example, your Head of Sales might oversee daily CRM updates, while your Operations Manager ensures inventory data is synchronised. For real-time AI applications like dynamic pricing, you'll need streaming pipelines capable of delivering data with sub-second latency. Define specific timeliness standards for each AI project and monitor compliance proactively.

| Data Quality Dimension | Evaluation Method | SME Goal |
|---|---|---|
| Completeness | Percentage of populated vs. required fields | Minimise null values in critical features |
| Accuracy | Cross-reference with source-of-truth systems | Eliminate errors in manual data entry |
| Consistency | Automated schema validation and formatting checks | Uniform date, currency, and contact formats |
| Timeliness | Automated staleness-detection alerts | Data updates aligned with operational needs (e.g., <24–48h) |

3. Data Accessibility and Integration

Your AI systems are only as effective as the data they can access. Unfortunately, many UK SMEs discover that their CRM, ERP, and accounting platforms operate in isolation, making it difficult to share information. Whether you can deploy AI quickly or get bogged down in months of custom development often comes down to integration readiness. The key is to determine which systems support modern integration methods - like REST APIs, webhooks, or ETL tools - and which require manual workarounds that could delay implementation. Start by testing how well your key systems can integrate.

3.1 Testing API and Integration Capabilities

Begin by evaluating whether your core systems offer open APIs. Check your CRM, ERP, and accounting platforms for developer documentation that explains how to use API keys, webhooks, or integration marketplaces. If the documentation is clear and allows you to generate access tokens, you're off to a good start. On the other hand, if you're stuck with batch data exports (like weekly CSV files), you’ll find it challenging to implement real-time AI solutions for tasks like dynamic pricing or fraud detection.

Investigate whether your systems support real-time data flows or are limited to batch processing. Webhooks, for example, enable systems to push updates automatically when events occur, eliminating the need for your AI to constantly check for changes. If you're dealing with older ERP or operational systems that expose only legacy or proprietary interfaces, you might need to invest in modernisation to ensure both functionality and security.
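To make the push model concrete, the sketch below shows the core of a webhook receiver: a function that takes the body of an incoming request and applies it to a local store. The payload shape, the `customer.updated` event name, and the in-memory `customer_cache` are all assumptions for illustration; each vendor defines its own payload format, and a production receiver would also verify the sender.

```python
import json

# In-memory stand-in for whatever store the AI system reads from.
customer_cache: dict[str, dict] = {}


def handle_webhook(body: bytes) -> bool:
    """Apply one pushed event to the local cache. Returns True if applied.

    Assumed payload: {"event": "customer.updated", "id": "...", "data": {...}}
    """
    try:
        event = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return False
    if not isinstance(event, dict):
        return False
    if event.get("event") != "customer.updated" or "id" not in event:
        return False
    customer_cache[event["id"]] = event.get("data", {})
    return True


ok = handle_webhook(b'{"event": "customer.updated", "id": "C1", "data": {"tier": "gold"}}')
print(ok, customer_cache)
```

With batch exports, the same update would only reach the AI system at the next scheduled file transfer.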

| Integration Component | Assessment Action | Readiness Indicator |
|---|---|---|
| API Availability | Check for "Open APIs" in CRM/ERP | API keys and documentation available |
| Data Flow | Review real-time vs. batch capabilities | Webhooks or streaming supported |
| Security | Assess access control measures | MFA and role-based permissions active |
| Compatibility | Test connections with simple tools | Successful sync with Google Sheets/Slack |
| Scalability | Check cloud storage and API limits | Handles increased API call volume |

Once you've confirmed integration capabilities, turn your attention to securing data access across platforms.

3.2 Reviewing Access Controls and Compliance

Even the most advanced systems need to be secure and compliant. Check if your platforms support role-based permissions, ensuring AI tools only access the data they truly need. For example, a chatbot handling customer queries should never have access to sensitive payroll information. Regularly audit these permissions to avoid unintended access escalation.
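Role-based permissions boil down to an explicit allow-list per role, with everything else denied. The following sketch uses hypothetical role and dataset names to show the chatbot example; real platforms expose this through their own admin settings rather than application code.

```python
# Hypothetical roles and datasets; each role lists only what it may read.
ROLE_PERMISSIONS = {
    "support_chatbot": {"customer_queries", "order_status"},
    "finance_forecaster": {"invoices", "payroll"},
}


def can_access(role: str, dataset: str) -> bool:
    """Deny by default: allow only datasets explicitly granted to the role."""
    return dataset in ROLE_PERMISSIONS.get(role, set())


print(can_access("support_chatbot", "order_status"))  # True
print(can_access("support_chatbot", "payroll"))       # False
```

Keeping the mapping in one reviewable place makes the regular permission audits much quicker.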

Ensure your systems support multi-factor authentication (MFA) and modern protocols like OAuth or API keys with expiration dates. Many SMEs still overlook basic security measures, but these are critical. If your AI vendor processes data outside the UK or EEA, confirm they hold recognised security certifications like SOC 2 or ISO 27001 and have clear data residency policies in line with UK GDPR.

Remember, as the data controller, your SME is legally responsible for compliance, even when working with third-party AI tools. Document your lawful basis for processing (e.g., Legitimate Interest or Legal Obligation) in a Record of Processing Activities (ROPA). Additionally, execute a Data Processing Agreement (DPA) with every AI vendor to meet Article 28 of the UK GDPR. A Data Protection Impact Assessment (DPIA) is required before deployment wherever processing is likely to result in a high risk to individuals, so plan for one if your AI system handles "special category data" like health records or biometric information.

3.3 Ensuring Compatibility with SME Tools

After confirming security, check if your systems align with the tools your SME already uses. Many UK SMEs depend on affordable platforms like Google Workspace, Microsoft 365, Xero, or CRMs such as HubSpot or Pipedrive. Test whether your AI tool integrates smoothly with these systems. For instance, try connecting it to Google Sheets using a public API or an automation tool like Zapier. If the data syncs consistently, you've confirmed basic compatibility.

Next, map your top five to ten business processes to see how data currently moves between tools. Identify where manual workarounds are being used and prioritise systems with open APIs or pre-built connectors. Without these, you could face costly custom development that eats into your AI budget before you even start training your models.

4. Data Governance and Security

Once you've integrated your data effectively, the next step is ensuring it’s governed and secured properly. Many UK SMEs assume this means hiring compliance officers or creating lengthy manuals. A simpler framework is enough, provided it clarifies who is responsible for specific data, how it’s managed, and what checks are in place to catch issues early. Without this, you risk falling into the same trap as 73% of UK and EU organisations, where AI tools are used without proper policies, leading to compliance failures and costly mistakes.

4.1 Implementing Data Governance Policies

Start small by creating a one-page "Acceptable Use" policy. This should outline which AI tools are approved, what data can or cannot be used, and who is in charge of specific data areas. Assign domain experts to act as data stewards - for example, the sales manager could oversee CRM data, while the finance lead manages accounting data. Document your decision-making processes in a simple Decisions Register. This decentralised approach works well for SMEs and avoids the need for hiring extra staff.

Your policy must also account for the safeguards around solely automated decision-making in UK GDPR Article 22. If your AI system makes decisions that have legal or similarly significant effects on individuals - like screening CVs, approving loans, or setting prices - build in meaningful review by a qualified person before those decisions are final, and give people a way to contest the outcome. Additionally, maintain a straightforward data inventory, such as a shared document, that lists what data you collect, where it’s stored, and who has access. This "Data Treasure Map" helps prevent unauthorised AI use and simplifies audits.

"AI governance for a small business is not a 200-page policy manual... It is a proportionate set of controls that match your size, your risk, and your regulatory environment." - LogiSam

Once the policies are in place, focus on access controls and retention practices. Use the principle of least privilege, ensuring AI tools only access the data they need. For example, a chatbot handling customer enquiries shouldn’t have access to payroll data. Implement role-based access controls (RBAC) and require two-factor authentication (2FA) for all systems. Set up data retention rules with automatic deletion schedules - for instance, payroll records must be kept for three years from the end of the tax year they relate to under HMRC rules, but there’s no need to store them indefinitely.
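An automatic deletion schedule needs a rule for deciding when a record has passed its retention period. The sketch below is one possible shape: the three-year payroll figure comes from the text, while the `RETENTION_YEARS` table, the function name, and the choice of start date (here, the end of a tax year) are assumptions to confirm against your own policy.

```python
from datetime import date

# Retention periods in years; only payroll is taken from the text.
RETENTION_YEARS = {"payroll": 3}


def deletion_due(category: str, period_start: date, today: date) -> bool:
    """True once `today` is past the retention period for the category."""
    years = RETENTION_YEARS[category]
    try:
        expiry = period_start.replace(year=period_start.year + years)
    except ValueError:
        # 29 February has no counterpart in a non-leap year; fall back to the 28th.
        expiry = period_start.replace(year=period_start.year + years, day=28)
    return today > expiry


today = date(2026, 4, 7)
print(deletion_due("payroll", date(2023, 4, 5), today))  # True
print(deletion_due("payroll", date(2024, 4, 5), today))  # False
```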

4.2 Establishing Feedback Loops

Data governance isn’t a one-time task; it needs regular review to catch problems early. Create a tiered review schedule: a quick 15-minute check each month, a more detailed 60-minute review every quarter, and a half-day audit once a year. This keeps the process manageable without overwhelming your team.

Integrate automated quality checks into your data pipelines to flag issues like missing values, out-of-range data, or mismatched formats before they affect your AI models. Poor data quality costs mid-sized organisations around €528,000 annually due to model failures and retraining, so catching errors early can save a lot of money. Pair these technical checks with quarterly staff surveys to ensure employees understand the AI policy and feel comfortable reporting near-misses or unauthorised tool use. This is particularly important as 32% of UK workers admit to using AI tools without their employer’s knowledge, which poses hidden compliance risks.
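The three kinds of issue named above (missing values, out-of-range data, mismatched formats) can each be expressed as a small rule. This is a sketch under assumed field names (`customer_id`, `order_value_gbp`, `email`) and an assumed valid range; the email pattern is deliberately loose and is not a full validator.

```python
import re

EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # loose format check


def check_row(row: dict) -> list[str]:
    """Return the issues found in one record (an empty list means clean)."""
    issues = []
    # Missing value check.
    if row.get("customer_id") in (None, ""):
        issues.append("missing customer_id")
    # Range check (0 to 100,000 GBP is an assumed business limit).
    amount = row.get("order_value_gbp")
    if not isinstance(amount, (int, float)) or not 0 <= amount <= 100_000:
        issues.append("order_value_gbp missing or out of range")
    # Format check.
    if not EMAIL_PATTERN.match(row.get("email") or ""):
        issues.append("email missing or malformed")
    return issues


good = {"customer_id": "C1", "order_value_gbp": 250.0, "email": "a@example.com"}
bad = {"customer_id": "", "order_value_gbp": -5, "email": "not-an-email"}
print(check_row(good))       # []
print(len(check_row(bad)))   # 3
```

Rows with a non-empty issue list can be quarantined for review instead of flowing into model training.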

These feedback mechanisms not only improve data quality but also prepare your business to meet GDPR standards.

4.3 Ensuring GDPR Compliance and Scalability

As the data controller, your SME is legally responsible for compliance, even when using third-party AI tools. Before deploying any AI system, document a valid lawful basis for processing data. For example, Legitimate Interest might apply for B2B marketing or fraud prevention, Contract for payroll or invoicing, and Legal Obligation for HMRC reporting. If you handle "special category data" like health records or biometric information, you’ll need a Data Protection Impact Assessment (DPIA).

Sign a Data Processing Agreement (DPA) with each AI vendor to meet Article 28 requirements. Check that vendors have recognised security certifications like SOC 2 or ISO 27001, and confirm where data is processed. If it’s outside the UK or EEA, ensure appropriate safeguards are in place. Update your privacy notices to clearly state your use of AI tools, explain why data is processed, and outline how individuals can request human review or opt out.

The Data (Use and Access) Act 2025 (DUAA) has eased some rules on automated decision-making, as long as decisions don’t involve special category data and include meaningful human oversight. However, penalties remain significant, with the ICO able to fine up to £17.5 million or 4% of global turnover, whichever is higher. By 19 June 2026, you’ll also need a clear complaints process that acknowledges data protection complaints within 30 days and responds without undue delay. Finally, conduct a data provenance audit to track the source and legal basis for all training data. This protects your business from future compliance risks as regulations evolve.

5. Technical Infrastructure Requirements

With solid data governance in place, the next step is ensuring your technical infrastructure can effectively support AI operations. Here's some good news: as of 2026, 80% of SMEs are adopting a cloud-first approach. This means you can skip the hefty upfront costs of purchasing hardware. For instance, cloud GPUs can be rented for as little as £0.40 per hour, compared to spending £40,000 on equivalent hardware[1]. This shift makes AI accessible to businesses of all sizes, but selecting the right infrastructure is key to success. A strong technical foundation also complements the data readiness practices discussed earlier.
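The rent-versus-buy comparison is simple arithmetic, worked through below using only the two figures quoted above. It ignores electricity, maintenance, and hardware depreciation, all of which would push the break-even point further out.

```python
HOURLY_RATE_GBP = 0.40       # rental figure quoted in the text
HARDWARE_COST_GBP = 40_000   # purchase figure quoted in the text

# Hours of rental that cost the same as buying the hardware outright.
break_even_hours = HARDWARE_COST_GBP / HOURLY_RATE_GBP
break_even_years = break_even_hours / (24 * 365)

print(f"{break_even_hours:,.0f} hours (~{break_even_years:.1f} years of continuous use)")
# 100,000 hours (~11.4 years of continuous use)
```

For an SME that trains models for a few hours a week, renting stays cheaper for far longer than the useful life of the hardware.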

5.1 Cloud and Compute Readiness

Start by adopting a serverless-first architecture with platforms like AWS Lambda, Google Cloud Run, or Azure Functions. When training AI models, opt for transient compute instances that can be shut down immediately after use. This approach is ideal for SMEs, as it avoids the cost of maintaining underutilised, always-on clusters.

To keep track of spending, tag all cloud resources by project. For non-production resources, schedule automatic shutdowns outside of working hours. For deploying models, use managed hosting services such as AWS SageMaker, Google Vertex AI, or Azure ML, which offer automatic scaling to meet demand.
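The shutdown rule itself is easy to express once resources carry tags. The sketch below shows only the decision logic; the `environment` tag name and the Monday to Friday, 08:00 to 18:00 window are assumptions, and actually stopping a resource would go through your cloud provider's scheduler or API.

```python
from datetime import datetime


def should_be_running(tags: dict[str, str], now: datetime) -> bool:
    """Production always runs; everything else runs only in working hours."""
    if tags.get("environment") == "production":
        return True
    is_weekday = now.weekday() < 5          # Monday=0 ... Friday=4
    return is_weekday and 8 <= now.hour < 18


dev_box = {"project": "churn-model", "environment": "dev"}
print(should_be_running(dev_box, datetime(2026, 4, 7, 10, 0)))  # True (Tuesday morning)
print(should_be_running(dev_box, datetime(2026, 4, 7, 22, 0)))  # False (Tuesday night)
```

The `project` tag plays no part in the decision here, but it is what lets you group the resulting costs per project in billing reports.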

Once your compute resources are streamlined, the next priority is ensuring you have scalable storage.

5.2 Storage Scalability

Your storage solution needs to handle both structured data (e.g., spreadsheets, databases) and unstructured data (e.g., images, videos, documents). For raw data and model files, object storage options like AWS S3, Google Cloud Storage, or Azure Blob are excellent choices due to their affordability, durability, and compatibility.

For analytical queries, pair this with a managed data warehouse like BigQuery, Snowflake, or Redshift Serverless. These services operate on a pay-per-query basis, so you only pay for what you use, avoiding upfront costs.

The growing preference for SQL-first feature engineering means many SMEs are moving away from complex feature stores. Instead, they rely on materialised feature tables within their data warehouses, simplifying operations and consolidating data management.

5.3 Building Basic MLOps Practices

To ensure reproducibility, implement version control with tools like Git, DVC (Data Version Control), or MLflow. These tools help you track data and model versions, maintaining a clear record of what was used during training. Incorporate checks for data quality and performance monitoring into your DevOps pipeline.

Use Docker containers to standardise deployment environments, ensuring consistent model behaviour from development to production. Set up automated monitoring that covers both model metrics, such as prediction accuracy and error rates, and the business KPIs those predictions are meant to improve. Instead of retraining models on a fixed schedule, trigger retraining only when performance declines. Include automated rollback features to revert to previous models if new ones underperform.
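Performance-triggered retraining and rollback can both be reduced to simple comparisons against a recorded baseline. The sketch below assumes accuracy is the monitored metric and that a drop of more than 0.05 counts as a decline; both choices, and the function names, are illustrative.

```python
def needs_retraining(baseline: float, current: float, tolerance: float = 0.05) -> bool:
    """True when live accuracy has fallen more than `tolerance` below baseline."""
    return baseline - current > tolerance


def choose_model(previous_score: float, new_score: float) -> str:
    """Keep the new model only if it is at least as good; otherwise roll back."""
    return "new" if new_score >= previous_score else "previous"


print(needs_retraining(baseline=0.90, current=0.82))        # True
print(needs_retraining(baseline=0.90, current=0.88))        # False
print(choose_model(previous_score=0.90, new_score=0.87))    # previous
```

Both scores in `choose_model` should be measured on the same held-out data, otherwise the comparison is not meaningful.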

For SMEs, basic MLOps practices typically cost between £2,000 and £5,000 per month for cloud infrastructure and tools[2]. However, this investment can drastically reduce model deployment timelines - from weeks to just hours.

| MLOps Component | SME Implementation Strategy | Recommended Tools |
|---|---|---|
| Versioning | Track code, data, and model artifacts together | DVC, MLflow, Git |
| Deployment | Use containers for environment consistency | Docker, Kubernetes |
| Monitoring | Focus on business KPIs and data drift | AWS CloudWatch, Azure Monitor |
| Automation | Trigger retraining based on performance dips | Apache Airflow, GitHub Actions |

6. Next Steps for SMEs

Turn the insights from your checklist into practical actions. Focus on improvements that offer the greatest strategic value and are technically feasible.

6.1 Prioritising Gaps and Creating a Roadmap

Start by categorising gaps based on their strategic importance and how easy they are to address. Tackle high-priority issues first. For instance, if your data quality needs improvement but you already have the tools to fix it, prioritise this before diving into more advanced MLOps practices. Always give precedence to data governance and security.

To make this process manageable, break your roadmap into three clear phases: Foundation, Implementation, and Scale.

Foundation (Months 1–2): Begin with a comprehensive data audit, map out your existing processes, and evaluate your team's readiness to adopt AI.

Implementation (Months 3–4): Launch a low-risk pilot project, such as automating a single workflow. Keep a close eye on ROI and invest in staff training during this phase.

Scale (Months 5–6): Expand AI adoption across departments, embed it into daily operations, and set up schedules for retraining your models.

Here's a quick guide to prioritising actions based on impact and feasibility:

| Prioritisation Category | Strategic Impact | Technical Feasibility | Recommended Action |
|---|---|---|---|
| Accelerate to MVP | High | High | Immediate investment |
| Incubate | Low | High | Test prototypes |
| Research | High | Low | Monitor for future |
| Shelve | Low | Low | Do not pursue |
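The matrix above translates directly into a lookup, which can be handy when scoring a long list of gaps in a spreadsheet export. The category and action labels come from the table; the function name `prioritise` and the "high"/"low" string inputs are assumptions for this sketch.

```python
# (strategic impact, technical feasibility) -> (category, recommended action)
ACTIONS = {
    ("high", "high"): ("Accelerate to MVP", "Immediate investment"),
    ("low", "high"): ("Incubate", "Test prototypes"),
    ("high", "low"): ("Research", "Monitor for future"),
    ("low", "low"): ("Shelve", "Do not pursue"),
}


def prioritise(impact: str, feasibility: str) -> tuple[str, str]:
    """Look up the category and action for a gap rated 'high' or 'low'."""
    return ACTIONS[(impact.lower(), feasibility.lower())]


print(prioritise("High", "High"))  # ('Accelerate to MVP', 'Immediate investment')
print(prioritise("High", "Low"))   # ('Research', 'Monitor for future')
```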

Once your roadmap is in place, consider seeking expert help to speed up your progress.

6.2 Leveraging AgentimiseAI Services

For many SMEs, navigating AI adoption without a clear plan can be daunting. That’s where AgentimiseAI comes in. Their Discovery Workshop (£1,050) is a focused, half-day session designed to identify high-ROI opportunities tailored to your business. By the end of the workshop, you'll have a concrete action plan and know which infrastructure gaps to address first - perfect for kicking off the Foundation phase of your roadmap.

AgentimiseAI also offers Leadership Training (£1,800 when bundled with the Discovery Workshop) for up to 10 non-technical leaders. This training equips your leadership team with the knowledge they need to understand AI's role in your operations and guide its adoption effectively through the Implementation and Scale phases. The bundle includes a free AI policy template to help you get started. These services are available across the UK with straightforward, fixed pricing.

Conclusion: Achieving AI Readiness for Long-Term Success

Getting your data infrastructure ready for AI isn’t a one-off task - it’s a continuous effort that sets the stage for lasting results. The checklist you’ve been following touches on the key areas: understanding your data, ensuring its accuracy, making it accessible, implementing proper governance, and building a technical foundation that can scale. Each step is a move towards AI systems that genuinely enhance your business.

Rushing into AI without proper management can lead to compliance headaches and security risks. By focusing on governance and infrastructure early, you’re not just preparing for AI - you’re safeguarding your business against the pitfalls of unregulated adoption while setting yourself up to unlock real benefits. The urgency is clear: solid data practices are essential for successful AI deployment. Consider this - small and medium-sized businesses (SMBs) report a median first-year net ROI of 180% from AI transformation, but only when the groundwork is done right.

Begin with the fundamentals: improve data quality, enforce governance policies, and secure your systems before diving into complex AI projects. Your approach should be practical and actionable. Start with improvements that are both impactful and achievable, then expand gradually.

For SMEs unsure where to start, AgentimiseAI offers a Discovery Workshop tailored to identify the most promising opportunities for your business. Paired with their Leadership Training, these services provide both strategic guidance and hands-on knowledge to help you adopt AI with confidence. Designed specifically for non-technical leaders across the UK, these offerings come with clear pricing and no hidden fees.

Success with AI doesn’t depend on having the largest budget - it depends on being prepared and taking decisive steps. By addressing each item on your checklist, you’re setting your SME up for secure and effective AI integration. Now that the groundwork is laid, it’s time to put your plans into action.

FAQs

What data should we fix first before starting AI?

Before diving into AI, it's crucial to take a close look at your data and assess its readiness. This means ensuring your data is in good shape - clean, well-structured, and fit for use in AI projects. Here are the key aspects to focus on:

Data quality: Is the information you have accurate, consistent, and reliable? Poor data can lead to poor outcomes.

Governance: Do you have proper policies and processes in place to manage your data? Clear rules make handling data much smoother.

Security: Is your data secure from breaches or unauthorised access? Protection is non-negotiable.

Architecture: Does your current infrastructure have the strength to support AI applications? A solid foundation is essential.

By addressing these areas, you'll set the stage for successful AI implementation.

Do we need a data warehouse to use AI?

A data warehouse isn't an absolute must for using AI, but it can make a big difference in how effective your AI efforts are. It ensures your data is well-organised, of high quality, and readily accessible - all critical ingredients for successful AI applications. For small and medium-sized enterprises (SMEs), there are other options too, like vector- or semantic-enabled platforms, which provide scalable and flexible solutions. While you can manage without one, having a data warehouse is strongly advised if you're aiming to scale AI effectively in environments that rely heavily on data.

When does an SME need a DPIA for AI?

Small and medium-sized enterprises (SMEs) must conduct a Data Protection Impact Assessment (DPIA) before starting any processing that is likely to result in a high risk to individuals. For AI, that typically includes systems that make automated decisions with legal or similarly significant effects, and systems that process special category data such as health records or biometric information.

Automated decisions of this kind also bring Article 22 of the UK GDPR into play, so the DPIA should record what human review is in place. A DPIA is a critical tool to spot and address these risks, helping businesses stay within regulatory boundaries while safeguarding individuals' rights.